Managing Networks and Traffic

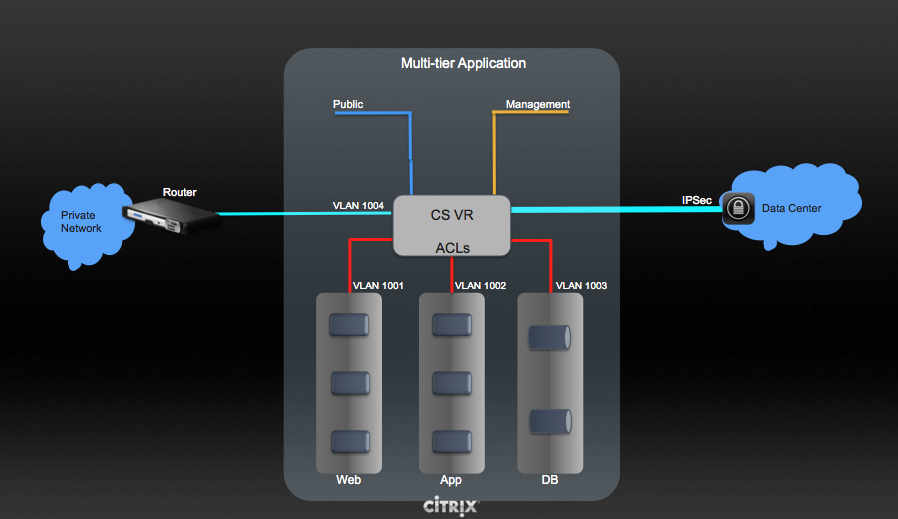

In CloudStack, guest VMs can communicate with each other using shared

infrastructure with the security and user perception that the guests

have a private LAN. The CloudStack virtual router is the main component

providing networking features for guest traffic.

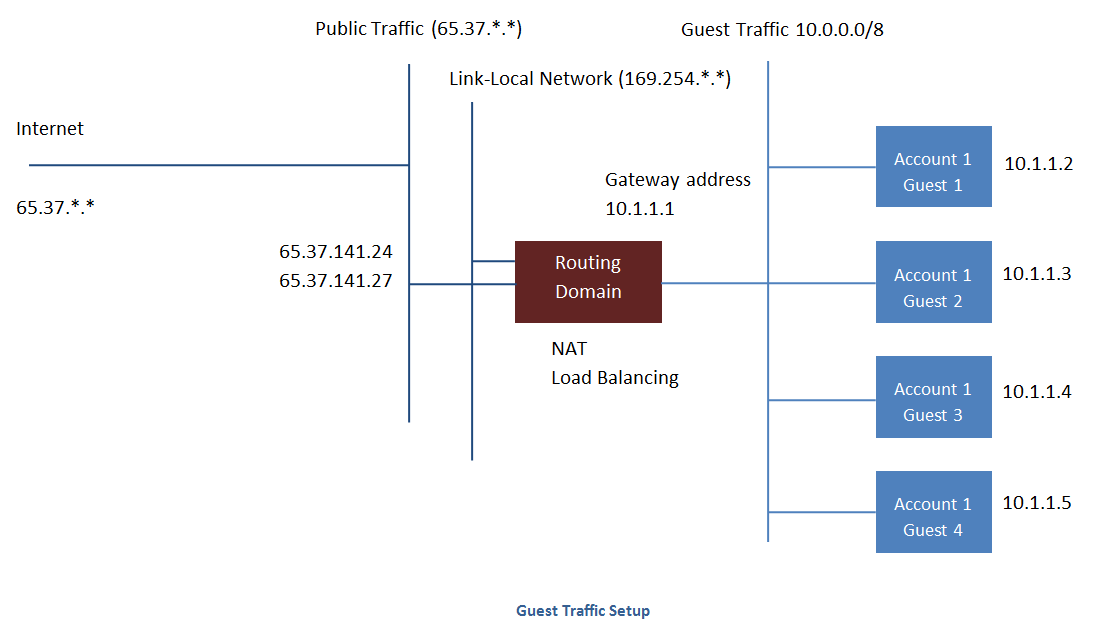

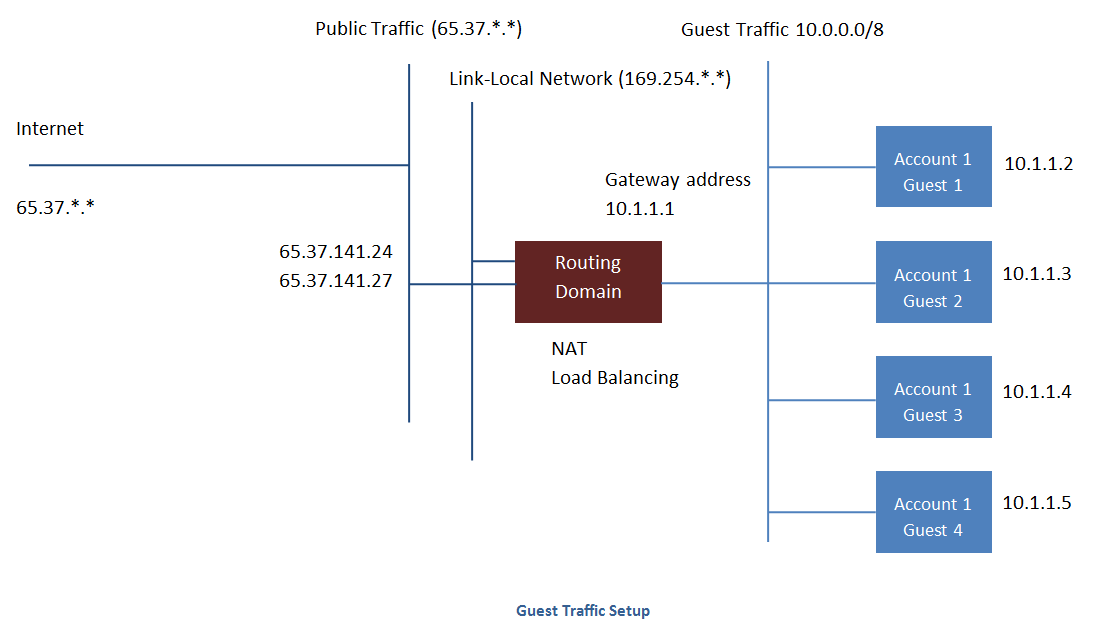

Guest Traffic

A network can carry guest traffic only between VMs within one zone.

Virtual machines in different zones cannot communicate with each other

using their IP addresses; they must communicate with each other by

routing through a public IP address.

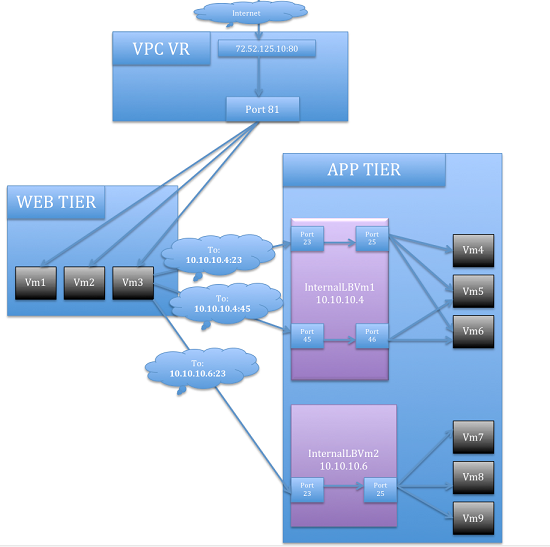

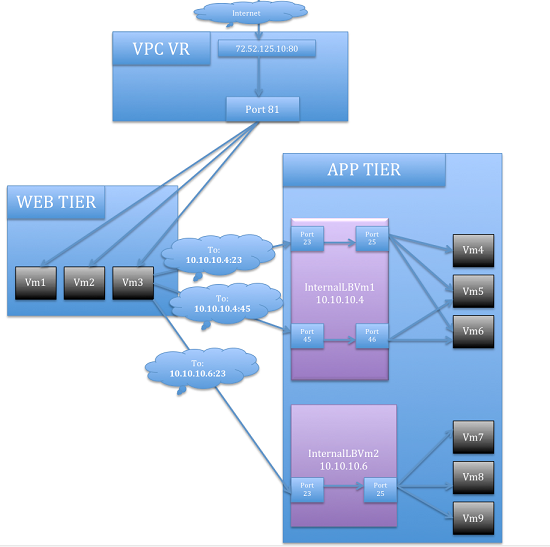

See a typical guest traffic setup given below:

Typically, the Management Server automatically creates a virtual router
for each network. A virtual router is a special virtual machine that
runs on the hosts. Each virtual router in an isolated network has three
network interfaces. Its eth0 interface serves as the gateway for the
guest traffic and has the IP address of 10.1.1.1. Its eth1 interface is
used by the system to configure the virtual router. Its eth2 interface
is assigned a public IP address for public traffic. If multiple public
VLANs are used, the router will have multiple public interfaces.

The virtual router provides DHCP and will automatically assign an IP

address for each guest VM within the IP range assigned for the network.

The user can manually reconfigure guest VMs to assume different IP

addresses.

Source NAT is automatically configured in the virtual router to forward

outbound traffic for all guest VMs.

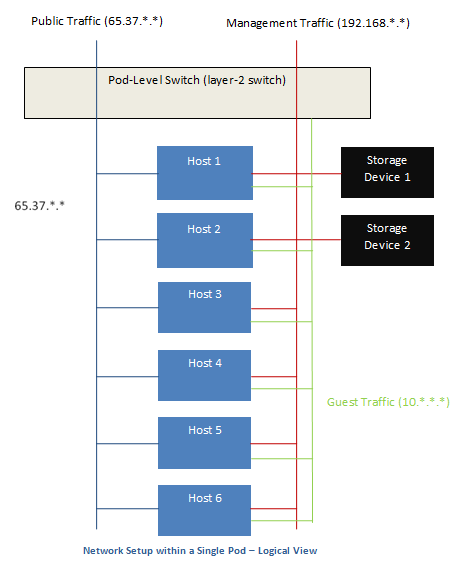

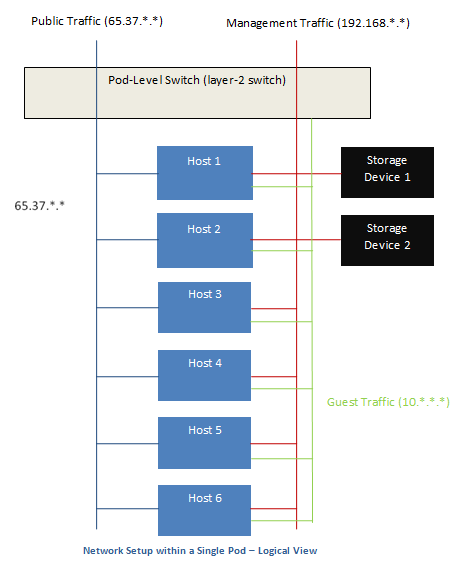

Networking in a Pod

The figure below illustrates network setup within a single pod. The

hosts are connected to a pod-level switch. At a minimum, the hosts

should have one physical uplink to each switch. Bonded NICs are

supported as well. The pod-level switch is a pair of redundant gigabit

switches with 10 G uplinks.

Servers are connected as follows:

- Storage devices are connected to only the network that carries

management traffic.

- Hosts are connected to networks for both management traffic and

public traffic.

- Hosts are also connected to one or more networks carrying guest

traffic.

We recommend the use of multiple physical Ethernet cards to implement

each network interface as well as redundant switch fabric in order to

maximize throughput and improve reliability.

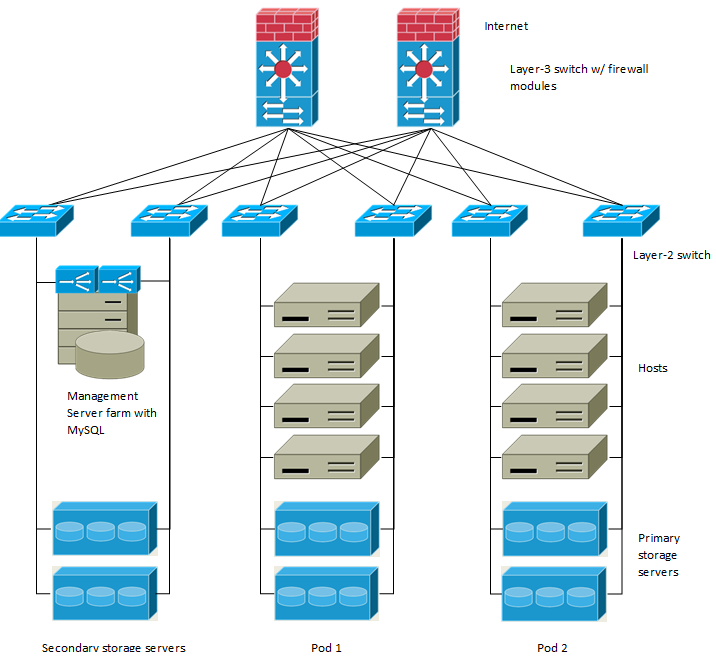

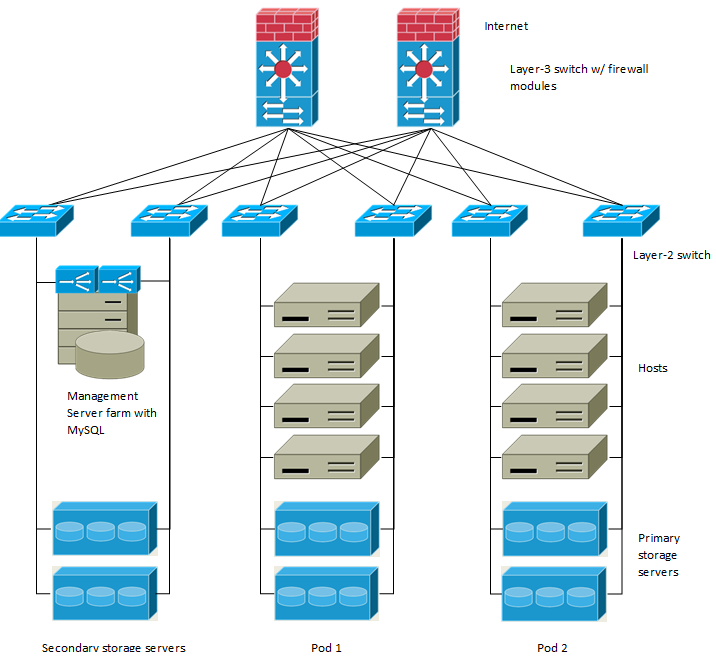

Networking in a Zone

The following figure illustrates the network setup within a single zone.

A firewall for management traffic operates in the NAT mode. The network

typically is assigned IP addresses in the 192.168.0.0/16 Class B private

address space. Each pod is assigned IP addresses in the 192.168.*.0/24

Class C private address space.
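
The relationship between the zone-wide range and the per-pod ranges can be illustrated with Python's standard `ipaddress` module. This is only a sketch of the addressing arithmetic: the zero-based pod index and the `pod_cidr` helper are hypothetical and are not part of CloudStack.

```python
import ipaddress

# Zone-wide management range from the text above.
zone_net = ipaddress.ip_network("192.168.0.0/16")

# Each pod takes one /24 out of the zone range (192.168.*.0/24).
pod_subnets = list(zone_net.subnets(new_prefix=24))


def pod_cidr(pod_index):
    """Return the /24 for a hypothetical zero-based pod index."""
    return pod_subnets[pod_index]


print(len(pod_subnets))                 # how many /24 blocks fit in the /16
print(pod_cidr(5))                      # the block for the sixth pod
print(pod_cidr(5).subnet_of(zone_net))  # every pod block lies inside the zone range
```

A /16 holds 256 blocks of size /24, which bounds the number of pods that can be addressed this way within one zone.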

Each zone has its own set of public IP addresses. Public IP addresses

from different zones do not overlap.

Basic Zone Physical Network Configuration

In a basic network, configuring the physical network is fairly

straightforward. You only need to configure one guest network to carry

traffic that is generated by guest VMs. When you first add a zone to

CloudStack, you set up the guest network through the Add Zone screens.

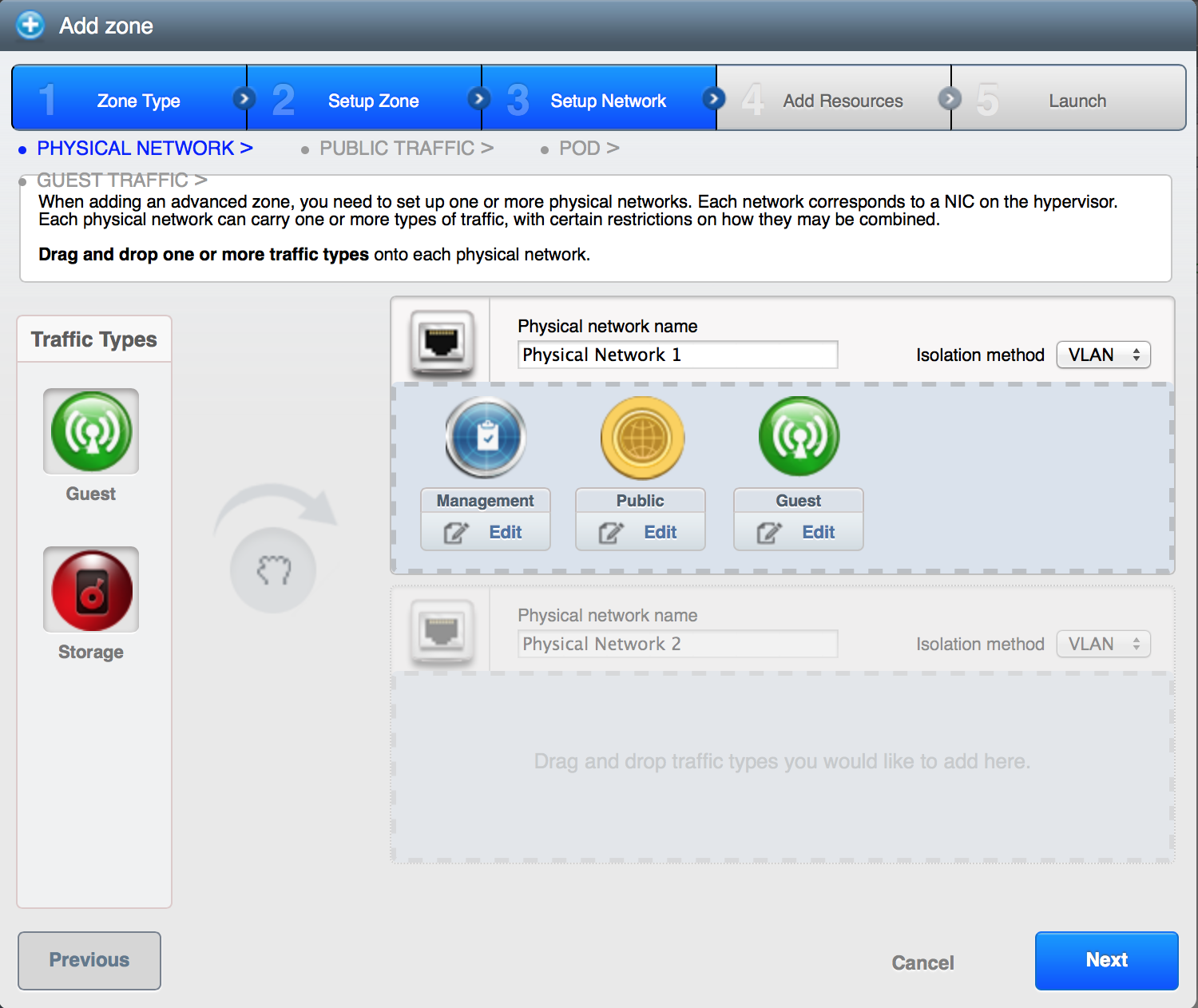

Advanced Zone Physical Network Configuration

Within a zone that uses advanced networking, you need to tell the

Management Server how the physical network is set up to carry different

kinds of traffic in isolation.

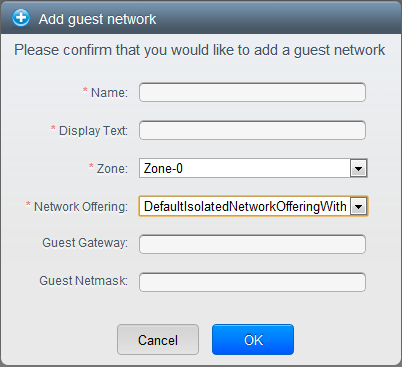

Configuring a Shared Guest Network

Log in to the CloudStack UI as administrator.

In the left navigation, choose Infrastructure.

On Zones, click View More.

Click the zone to which you want to add a guest network.

Click the Physical Network tab.

Click the physical network you want to work with.

On the Guest node of the diagram, click Configure.

Click the Network tab.

Click Add guest network.

The Add guest network window is displayed.

Specify the following:

Name: The name of the network. This will be visible to the user.

Description: The short description of the network that can be

displayed to users.

VLAN ID: The unique ID of the VLAN.

Isolated VLAN ID: The unique ID of the Secondary Isolated

VLAN.

Scope: The available scopes are Domain, Account, Project, and

All.

- Domain: Selecting Domain limits the scope of this guest
network to the domain you specify. The network will not be
available for other domains. If you select Subdomain Access,
the guest network is available to all the subdomains within
the selected domain.

- Account: The account for which the guest network is being
created. You must specify the domain the account belongs to.

- Project: The project for which the guest network is being
created. You must specify the domain the project belongs to.

- All: The guest network is available to all the domains,
accounts, and projects within the selected zone.

Network Offering: If the administrator has configured multiple

network offerings, select the one you want to use for this

network.

Gateway: The gateway that the guests should use.

Netmask: The netmask in use on the subnet the guests will use.

IP Range: A range of IP addresses that are accessible from the

Internet and are assigned to the guest VMs.

If one NIC is used, these IPs should be in the same CIDR in the

case of IPv6.

IPv6 CIDR: The network prefix that defines the guest network

subnet. This is the CIDR that describes the IPv6 addresses in use

in the guest networks in this zone. To allot IP addresses from

within a particular address block, enter a CIDR.

Network Domain: A custom DNS suffix at the level of a network.

If you want to assign a special domain name to the guest VM

network, specify a DNS suffix.

Click OK to confirm.
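
Before filling in the Gateway, Netmask, and IP Range fields, it can help to confirm that the values are consistent with each other. The following is a minimal sketch using Python's standard `ipaddress` module. The function name `check_guest_range` and the two checks it performs are illustrative assumptions; CloudStack applies its own validation, which may differ.

```python
import ipaddress


def check_guest_range(gateway, netmask, start_ip, end_ip):
    """Return a list of problems; an empty list means the values look consistent."""
    # Derive the subnet from the gateway address and the netmask.
    subnet = ipaddress.ip_interface(f"{gateway}/{netmask}").network
    start = ipaddress.ip_address(start_ip)
    end = ipaddress.ip_address(end_ip)

    problems = []
    if start not in subnet or end not in subnet:
        problems.append(f"IP range is not inside {subnet}")
    if start > end:
        problems.append("start IP is greater than end IP")
    return problems


print(check_guest_range("10.1.1.1", "255.255.255.0", "10.1.1.10", "10.1.1.200"))
print(check_guest_range("10.1.1.1", "255.255.255.0", "10.1.1.10", "10.1.2.200"))
```

The first call uses a range inside the gateway's subnet; the second uses an end address outside it and reports the mismatch.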

Using Multiple Guest Networks

In zones that use advanced networking, additional networks for guest

traffic may be added at any time after the initial installation. You can

also customize the domain name associated with the network by specifying

a DNS suffix for each network.

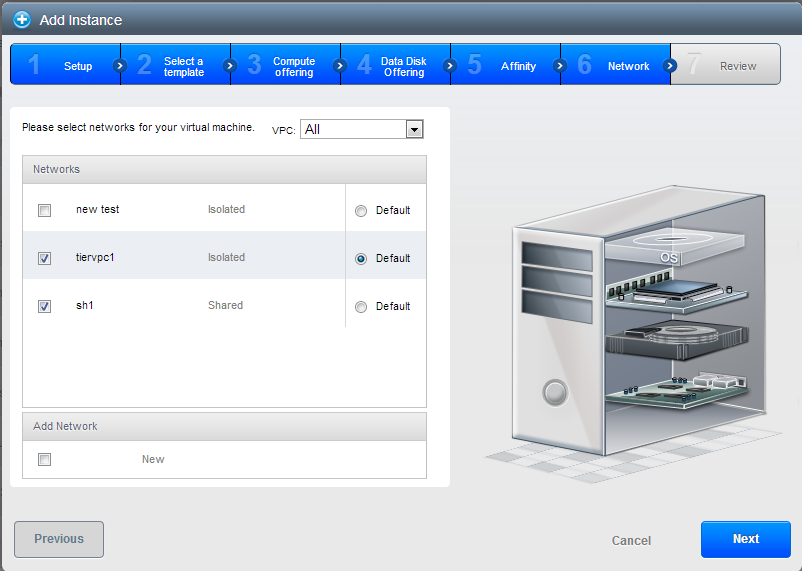

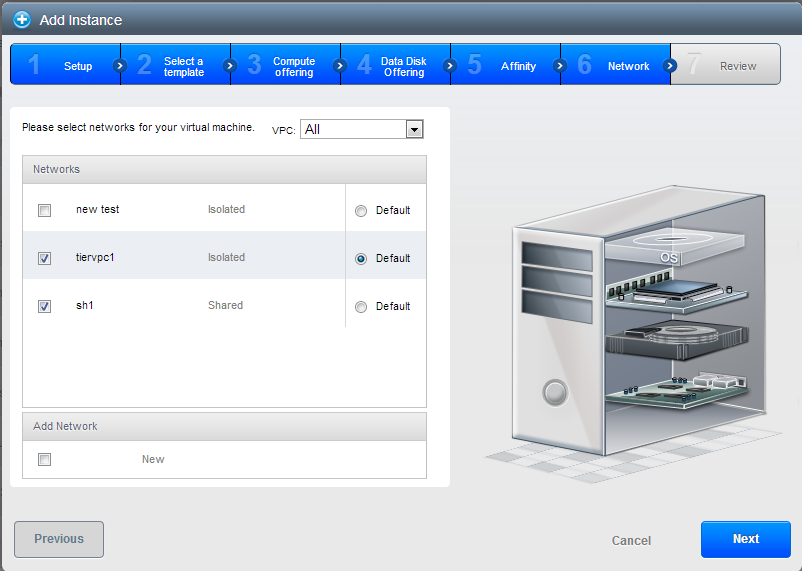

A VM’s networks are defined at VM creation time. A VM cannot add or

remove networks after it has been created, although the user can go into

the guest and remove the IP address from the NIC on a particular

network.

Each VM has just one default network. The virtual router’s DHCP reply

will set the guest’s default gateway as that for the default network.

Multiple non-default networks may be added to a guest in addition to the

single, required default network. The administrator can control which

networks are available as the default network.

Additional networks can either be available to all accounts or be

assigned to a specific account. Networks that are available to all

accounts are zone-wide. Any user with access to the zone can create a VM

with access to that network. These zone-wide networks provide little or

no isolation between guests. Networks that are assigned to a specific

account provide strong isolation.

Adding an Additional Guest Network

- Log in to the CloudStack UI as an administrator or end user.

- In the left navigation, choose Network.

- Click Add guest network. Provide the following information:

- Name: The name of the network. This will be user-visible.

- Display Text: The description of the network. This will be

user-visible.

- Zone: The name of the zone this network applies to. Each zone

is a broadcast domain, and therefore each zone has a different IP

range for the guest network. The administrator must configure the

IP range for each zone.

- Network offering: If the administrator has configured multiple

network offerings, select the one you want to use for this

network.

- Guest Gateway: The gateway that the guests should use.

- Guest Netmask: The netmask in use on the subnet the guests

will use.

- Click Create.

Reconfiguring Networks in VMs

CloudStack provides you the ability to move VMs between networks and

reconfigure a VM’s network. You can remove a VM from a network and add it

to a new network. You can also change the default network of a virtual

machine. With this functionality, hybrid or traditional server loads can

be accommodated with ease.

This feature is supported on XenServer, VMware, and KVM hypervisors.

Prerequisites

Ensure that vm-tools are running on guest VMs for adding or removing

networks to work on VMware hypervisor.

Adding a Network

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, click Instances.

Choose the VM that you want to work with.

Click the NICs tab.

Click Add network to VM.

The Add network to VM dialog is displayed.

In the drop-down list, select the network that you would like to add

this VM to.

A new NIC is added for this network. You can view the following

details in the NICs page:

- ID

- Network Name

- Type

- IP Address

- Gateway

- Netmask

- Is default

- CIDR (for IPv6)

Removing a Network

- Log in to the CloudStack UI as an administrator or end user.

- In the left navigation, click Instances.

- Choose the VM that you want to work with.

- Click the NICs tab.

- Locate the NIC you want to remove.

- Click the Remove NIC button.

- Click Yes to confirm.

Selecting the Default Network

- Log in to the CloudStack UI as an administrator or end user.

- In the left navigation, click Instances.

- Choose the VM that you want to work with.

- Click the NICs tab.

- Locate the NIC you want to work with.

- Click the Set default NIC button.

- Click Yes to confirm.

Changing the Network Offering on a Guest Network

A user or administrator can change the network offering that is

associated with an existing guest network.

Log in to the CloudStack UI as an administrator or end user.

If you are changing from a network offering that uses the CloudStack

virtual router to one that uses external devices as network service

providers, you must first stop all the VMs on the network.

In the left navigation, choose Network.

Click the name of the network you want to modify.

In the Details tab, click Edit.

In Network Offering, choose the new network offering, then click

Apply.

A prompt is displayed asking whether you want to keep the existing
CIDR. This is to let you know that if you change the network
offering, the CIDR will be affected.

If you are changing between an offering that uses the virtual router
as the provider and one that uses an external network device as the
provider, you must acknowledge the change of CIDR to continue, so
choose Yes.

Wait for the update to complete. Don’t try to restart VMs until the

network change is complete.

If you stopped any VMs, restart them.

IP Reservation in Isolated Guest Networks

In isolated guest networks, a part of the guest IP address space can be

reserved for non-CloudStack VMs or physical servers. To do so, you

configure a range of Reserved IP addresses by specifying the CIDR when a

guest network is in Implemented state. If your customers wish to have

non-CloudStack controlled VMs or physical servers on the same network,

they can share a part of the IP address space that is primarily provided

to the guest network.

In an Advanced zone, an IP address range or a CIDR is assigned to a

network when the network is defined. The CloudStack virtual router acts

as the DHCP server and uses CIDR for assigning IP addresses to the guest

VMs. If you decide to reserve CIDR for non-CloudStack purposes, you can

specify a part of the IP address range or the CIDR that should only be

allocated by the DHCP service of the virtual router to the guest VMs

created in CloudStack. The remaining IPs in that network are called

Reserved IP Range. When IP reservation is configured, the administrator

can add additional VMs or physical servers that are not part of

CloudStack to the same network and assign them the Reserved IP

addresses. CloudStack guest VMs cannot acquire IPs from the Reserved IP

Range.

IP Reservation Considerations

Consider the following before you reserve an IP range for non-CloudStack

machines:

IP Reservation is supported only in Isolated networks.

IP Reservation can be applied only when the network is in Implemented

state.

No IP Reservation is done by default.

Guest VM CIDR you specify must be a subset of the network CIDR.

Specify a valid Guest VM CIDR. IP Reservation is applied only if no

active IPs exist outside the Guest VM CIDR.

You cannot apply IP Reservation if any VM is allotted an IP

address that is outside the Guest VM CIDR.

To reset an existing IP Reservation, apply IP reservation by

specifying the value of network CIDR in the CIDR field.
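
The subset and active-IP conditions listed above can be expressed as a small pre-check. This is a sketch under stated assumptions: `can_apply_reservation` is a hypothetical helper, and the list of active IPs is assumed to be gathered by the operator; it does not reproduce CloudStack's internal logic.

```python
import ipaddress


def can_apply_reservation(network_cidr, guest_vm_cidr, active_ips):
    """Check the documented preconditions; returns (ok, reason)."""
    network = ipaddress.ip_network(network_cidr)
    guest = ipaddress.ip_network(guest_vm_cidr)

    # The Guest VM CIDR must be a subset of the network CIDR.
    if not guest.subnet_of(network):
        return False, "Guest VM CIDR is not a subset of the network CIDR"

    # No active IP may lie outside the Guest VM CIDR.
    outside = [ip for ip in active_ips if ipaddress.ip_address(ip) not in guest]
    if outside:
        return False, f"active IPs outside the Guest VM CIDR: {outside}"

    return True, "ok"


print(can_apply_reservation("10.1.1.0/24", "10.1.1.0/26", ["10.1.1.10", "10.1.1.40"]))
print(can_apply_reservation("10.1.1.0/24", "10.1.1.0/26", ["10.1.1.10", "10.1.1.70"]))
```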

For example, the following table describes three scenarios of guest

network creation:

| Case | CIDR | Network CIDR | Reserved IP Range for Non-CloudStack VMs | Description |
|---|---|---|---|---|
| 1 | 10.1.1.0/24 | None | None | No IP Reservation. |
| 2 | 10.1.1.0/26 | 10.1.1.0/24 | 10.1.1.64 to 10.1.1.254 | IP Reservation configured by the UpdateNetwork API with guestvmcidr=10.1.1.0/26 or enter 10.1.1.0/26 in the CIDR field in the UI. |
| 3 | 10.1.1.0/24 | None | None | Removing IP Reservation by the UpdateNetwork API with guestvmcidr=10.1.1.0/24 or enter 10.1.1.0/24 in the CIDR field in the UI. |
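
The Reserved IP Range in case 2 can be reproduced with a few lines of Python. This is an illustrative calculation only: `reserved_range` is a hypothetical helper, and it reports just the first and last reserved host addresses, which is meaningful when the Guest VM CIDR sits at the start of the network CIDR, as in the table.

```python
import ipaddress


def reserved_range(network_cidr, guest_vm_cidr):
    """Return (first, last) reserved host address, or None if nothing is reserved."""
    network = ipaddress.ip_network(network_cidr)
    guest = ipaddress.ip_network(guest_vm_cidr)

    # Usable host addresses of the network that fall outside the Guest VM CIDR.
    reserved = [ip for ip in network.hosts() if ip not in guest]
    if not reserved:
        return None
    return str(reserved[0]), str(reserved[-1])


print(reserved_range("10.1.1.0/24", "10.1.1.0/26"))  # case 2
print(reserved_range("10.1.1.0/24", "10.1.1.0/24"))  # cases 1 and 3: no reservation
```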

Limitations

- IP Reservation is not supported if active IPs are found outside the
Guest VM CIDR.

- If upgrading a network offering causes a change in CIDR (such as
upgrading an offering with no external devices to one with external
devices), any existing IP Reservation becomes void. Reconfigure IP
Reservation in the new re-implemented network.

Best Practices

Apply IP Reservation to the guest network as soon as the network state

changes to Implemented. If you apply reservation soon after the first

guest VM is deployed, fewer conflicts occur while applying

reservation.

Reserving an IP Range

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, choose Network.

Click the name of the network you want to modify.

In the Details tab, click Edit.

The CIDR field becomes editable.

In CIDR, specify the Guest VM CIDR.

Click Apply.

Wait for the update to complete. The Network CIDR and the Reserved IP

Range are displayed on the Details page.

Reserving Public IP Addresses and VLANs for Accounts

CloudStack provides you the ability to reserve a set of public IP

addresses and VLANs exclusively for an account. During zone creation,

you can continue defining a set of VLANs and multiple public IP ranges.

This feature extends the functionality to enable you to dedicate a fixed

set of VLANs and guest IP addresses for a tenant.

Note that if an account has consumed all the VLANs and IPs dedicated to

it, the account can acquire two more resources from the system.

CloudStack provides the root admin with two configuration parameters to

modify this default behavior: use.system.public.ips and

use.system.guest.vlans. These global parameters enable the root admin to

disallow an account from acquiring public IPs and guest VLANs from the

system, if the account has dedicated resources and these dedicated

resources have all been consumed. Both parameters can be set at the
account level.

This feature provides you the following capabilities:

Reserve a VLAN range and public IP address range from an Advanced

zone and assign it to an account

Disassociate a VLAN and public IP address range from an account

View the number of public IP addresses allocated to an account

Check whether the required range is available and conforms to

account limits.

The maximum IPs per account limit cannot be superseded.

Dedicating IP Address Ranges to an Account

Log in to the CloudStack UI as administrator.

In the left navigation bar, click Infrastructure.

In Zones, click View All.

Choose the zone you want to work with.

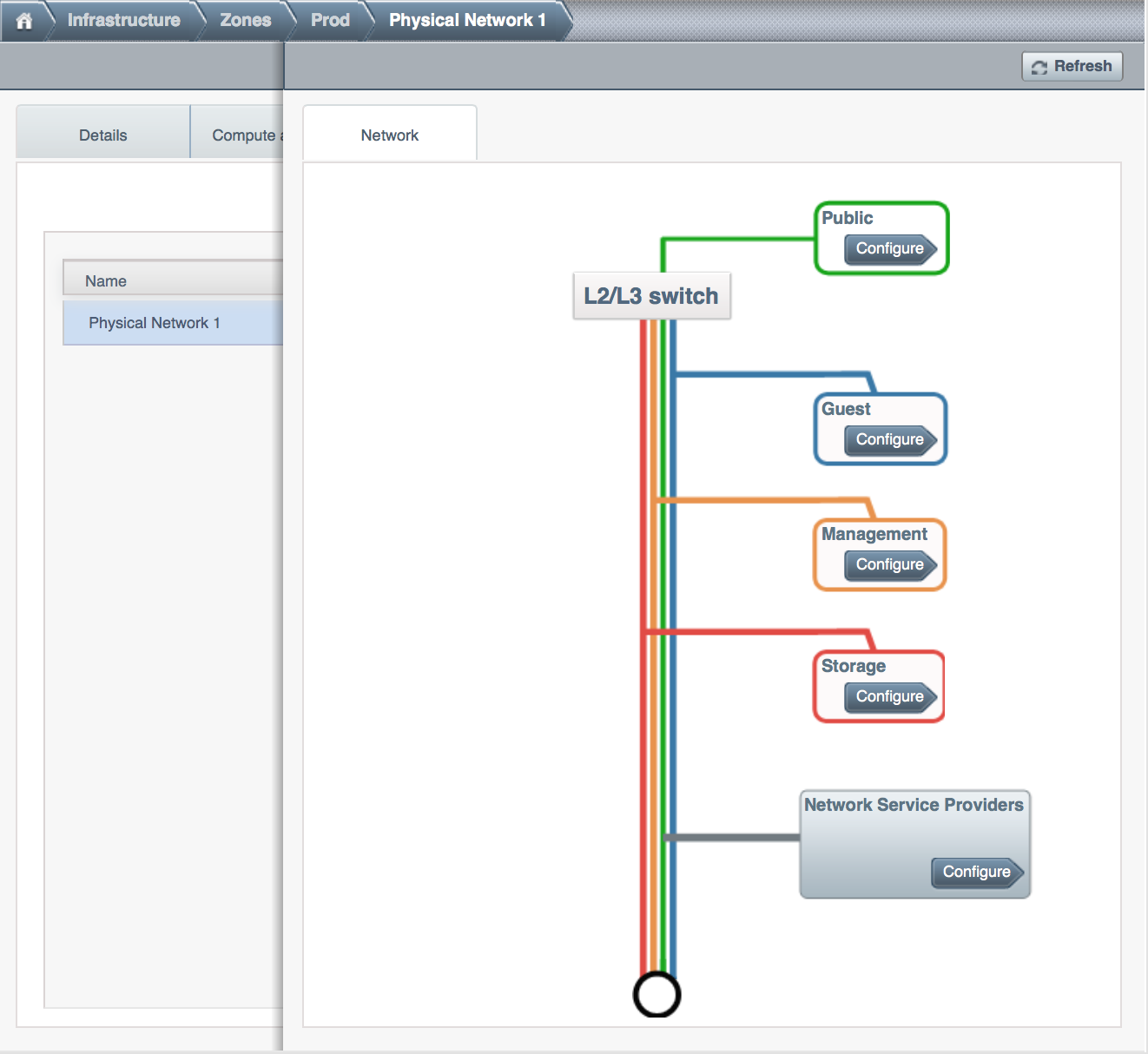

Click the Physical Network tab.

In the Public node of the diagram, click Configure.

Click the IP Ranges tab.

You can either assign an existing IP range to an account, or create a

new IP range and assign to an account.

To assign an existing IP range to an account, perform the following:

Locate the IP range you want to work with.

Click the Add Account button.

The Add Account dialog is displayed.

Specify the following:

- Account: The account to which you want to assign the IP

address range.

- Domain: The domain associated with the account.

To create a new IP range and assign it to an account, perform the

following:

Specify the following:

Click Add.

Dedicating VLAN Ranges to an Account

After the CloudStack Management Server is installed, log in to the

CloudStack UI as administrator.

In the left navigation bar, click Infrastructure.

In Zones, click View All.

Choose the zone you want to work with.

Click the Physical Network tab.

In the Guest node of the diagram, click Configure.

Select the Dedicated VLAN Ranges tab.

Click Dedicate VLAN Range.

The Dedicate VLAN Range dialog is displayed.

Specify the following:

- VLAN Range: The VLAN range that you want to assign to an

account.

- Account: The account to which you want to assign the

selected VLAN range.

- Domain: The domain associated with the account.

Configuring Multiple IP Addresses on a Single NIC

CloudStack provides you the ability to associate multiple private IP

addresses per guest VM NIC. In addition to the primary IP, you can

assign additional IPs to the guest VM NIC. This feature is supported on

all the network configurations: Basic, Advanced, and VPC. Security

Groups, Static NAT and Port forwarding services are supported on these

additional IPs.

As always, you can specify an IP from the guest subnet; if not

specified, an IP is automatically picked up from the guest VM subnet.

You can view the IPs associated with each guest VM NIC in the UI.

You can apply NAT on these additional guest IPs by using network

configuration option in the CloudStack UI. You must specify the NIC to

which the IP should be associated.

This feature is supported on XenServer, KVM, and VMware hypervisors.

Note that Basic zone security groups are not supported on VMware.

Use Cases

Some of the use cases are described below:

- Network devices, such as firewalls and load balancers, generally work

best when they have access to multiple IP addresses on the network

interface.

- Moving private IP addresses between interfaces or instances.

Applications that are bound to specific IP addresses can be moved

between instances.

- Hosting multiple SSL Websites on a single instance. You can install

multiple SSL certificates on a single instance, each associated with

a distinct IP address.

Guidelines

To prevent IP conflict, configure different subnets when multiple

networks are connected to the same VM.

Assigning Additional IPs to a VM

Log in to the CloudStack UI.

In the left navigation bar, click Instances.

Click the name of the instance you want to work with.

In the Details tab, click NICs.

Click View Secondary IPs.

Click Acquire New Secondary IP, and click Yes in the confirmation

dialog.

You need to configure the IP on the guest VM NIC manually. CloudStack

will not automatically configure the acquired IP address on the VM.

Ensure that the IP address configuration persists across VM reboots.

Within a few moments, the new IP address should appear with the state

Allocated. You can now use the IP address in Port Forwarding or

StaticNAT rules.

Port Forwarding and StaticNAT Services Changes

Because multiple IPs can be associated per NIC, you are allowed to

select a desired IP for the Port Forwarding and StaticNAT services. The

default is the primary IP. To enable this functionality, an extra

optional parameter ‘vmguestip’ is added to the Port Forwarding and
StaticNAT APIs (enableStaticNat, createIpForwardingRule) to indicate on
which IP address NAT needs to be configured. If vmguestip is passed, NAT
is configured on the specified private IP of the VM. If it is not
passed, NAT is configured on the primary IP of the VM.
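
As a rough illustration of the optional parameter, the sketch below builds the query string for an enableStaticNat call with and without vmguestip. The helper name `enable_static_nat_query` and the placeholder IDs are hypothetical. The parameter names are the ones that appear in this guide, and request signing and authentication are deliberately left out.

```python
from urllib.parse import urlencode


def enable_static_nat_query(ip_address_id, vm_id, vm_guest_ip=None):
    """Build the query string for enableStaticNat (unsigned, for illustration)."""
    params = {
        "command": "enableStaticNat",
        "response": "json",
        "ipaddressid": ip_address_id,
        "virtualmachineid": vm_id,
    }
    # Only send vmguestip when a specific secondary IP is wanted;
    # when it is omitted, NAT applies to the primary IP of the VM.
    if vm_guest_ip is not None:
        params["vmguestip"] = vm_guest_ip
    return urlencode(params)


print(enable_static_nat_query("IP_UUID", "VM_UUID"))
print(enable_static_nat_query("IP_UUID", "VM_UUID", vm_guest_ip="10.1.1.25"))
```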

About Multiple IP Ranges

Note

The feature can only be implemented on IPv4 addresses.

CloudStack provides you with the flexibility to add guest IP ranges from

different subnets in Basic zones and security groups-enabled Advanced

zones. For security groups-enabled Advanced zones, it implies multiple

subnets can be added to the same VLAN. With the addition of this

feature, you will be able to add IP address ranges from the same subnet

or from a different one when IP addresses are exhausted. This in
turn allows you to employ a higher number of subnets and thus reduce the

address management overhead. To support this feature, the capability of

createVlanIpRange API is extended to add IP ranges also from a

different subnet.

Ensure that you manually configure the gateway of the new subnet before

adding the IP range. Note that CloudStack supports only one gateway for

a subnet; overlapping subnets are not currently supported.
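
Because overlapping subnets are not supported, it can be useful to check a candidate subnet against the existing ones before adding a range. The following is a minimal sketch with Python's standard `ipaddress` module; `find_overlap` is a hypothetical helper and the list of existing CIDRs is assumed to be supplied by the operator.

```python
import ipaddress


def find_overlap(existing_cidrs, new_cidr):
    """Return the first existing CIDR that overlaps new_cidr, or None."""
    new_net = ipaddress.ip_network(new_cidr)
    for cidr in existing_cidrs:
        if ipaddress.ip_network(cidr).overlaps(new_net):
            return cidr
    return None


existing = ["10.1.1.0/24"]
print(find_overlap(existing, "10.1.2.0/24"))    # distinct subnet
print(find_overlap(existing, "10.1.1.128/25"))  # falls inside an existing subnet
```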

Use the deleteVlanIpRange API to delete IP ranges. This operation
fails if an IP from the range being removed is in use. If the range
being removed contains the IP address on which the DHCP server is
running, CloudStack acquires a new IP from the same subnet. If no IP is
available in the subnet, the remove operation fails.

This feature is supported on KVM, XenServer, and VMware hypervisors.

About Elastic IPs

Elastic IP (EIP) addresses are the IP addresses that are associated with

an account, and act as static IP addresses. The account owner has

complete control over the Elastic IP addresses that belong to the

account. As an account owner, you can allocate an Elastic IP to a VM of

your choice from the EIP pool of your account. Later if required you can

reassign the IP address to a different VM. This feature is extremely

helpful during VM failure. Instead of replacing the VM which is down,

the IP address can be reassigned to a new VM in your account.

Similar to the public IP address, Elastic IP addresses are mapped to

their associated private IP addresses by using StaticNAT. The EIP

service is equipped with StaticNAT (1:1) service in an EIP-enabled basic

zone. The default network offering,

DefaultSharedNetscalerEIPandELBNetworkOffering, provides your network

with EIP and ELB network services if a NetScaler device is deployed in

your zone. Consider the following illustration for more details.

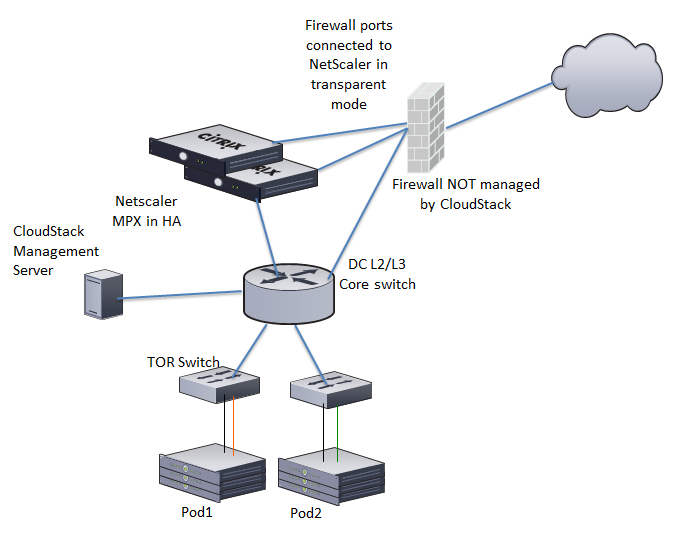

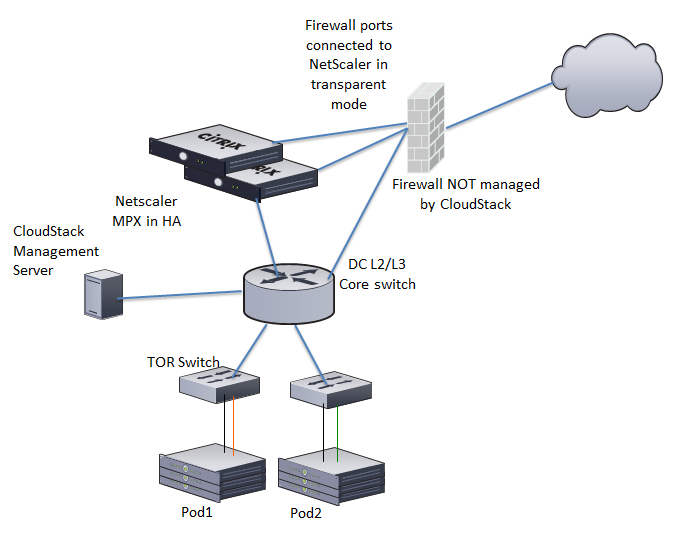

In the illustration, a NetScaler appliance is the default entry or exit

point for the CloudStack instances, and firewall is the default entry or

exit point for the rest of the data center. NetScaler provides LB

services and staticNAT service to the guest networks. The guest traffic

in the pods and the Management Server are on different subnets / VLANs.

The policy-based routing in the data center core switch sends the public

traffic through the NetScaler, whereas traffic for the rest of the data center goes

through the firewall.

The EIP work flow is as follows:

When a user VM is deployed, a public IP is automatically acquired

from the pool of public IPs configured in the zone. This IP is owned

by the VM’s account.

Each VM will have its own private IP. When the user VM starts, Static

NAT is provisioned on the NetScaler device by using the Inbound

Network Address Translation (INAT) and Reverse NAT (RNAT) rules

between the public IP and the private IP.

Note

Inbound NAT (INAT) is a type of NAT supported by NetScaler, in which

the destination IP address is replaced in the packets from the public

network, such as the Internet, with the private IP address of a VM in

the private network. Reverse NAT (RNAT) is a type of NAT supported by

NetScaler, in which the source IP address is replaced in the packets

generated by a VM in the private network with the public IP address.

This default public IP will be released in two cases:

- When the VM is stopped. When the VM starts, it again receives a

new public IP, not necessarily the same one allocated initially,

from the pool of Public IPs.

- The user acquires a public IP (Elastic IP). This public IP is

associated with the account, but will not be mapped to any private

IP. However, the user can enable Static NAT to associate this IP

to the private IP of a VM in the account. The Static NAT rule for

the public IP can be disabled at any time. When Static NAT is

disabled, a new public IP is allocated from the pool, which is not

necessarily the same one allocated initially.

For the deployments where public IPs are limited resources, you have the

flexibility to choose not to allocate a public IP by default. You can

use the Associate Public IP option to turn on or off the automatic

public IP assignment in the EIP-enabled Basic zones. If you turn off the

automatic public IP assignment while creating a network offering, only a

private IP is assigned to a VM when the VM is deployed with that network

offering. Later, the user can acquire an IP for the VM and enable static

NAT.

For more information on the Associate Public IP option, see

“Creating a New Network Offering”.

Note

The Associate Public IP feature is designed only for use with user VMs.

The System VMs continue to get both public and private IPs by default,

irrespective of the network offering configuration.

New deployments which use the default shared network offering with EIP

and ELB services to create a shared network in the Basic zone will

continue allocating public IPs to each user VM.

Portable IPs

About Portable IP

Portable IPs in CloudStack are a region-level pool of IPs, which are
elastic in nature and can be transferred across geographically
separated zones. As an administrator, you can provision a pool of
portable public IPs at the region level and make them available for
user consumption. Users can acquire portable IPs if the administrator
has provisioned portable IPs at the level of the region they are part
of. These IPs can be used for any service within an advanced zone. You
can also use portable IPs for EIP services in basic zones.

The salient features of Portable IP are as follows:

- IP is statically allocated

- IP need not be associated with a network

- IP association is transferable across networks

- IP is transferable across both Basic and Advanced zones

- IP is transferable across VPC, non-VPC isolated and shared networks

- Portable IP transfer is available only for static NAT.

Guidelines

Before transferring to another network, ensure that no network rules

(Firewall, Static NAT, Port Forwarding, and so on) exist on that

portable IP.

Configuring Portable IPs

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, click Regions.

Choose the region that you want to work with.

Click View Portable IP.

Click Portable IP Range.

The Add Portable IP Range window is displayed.

Specify the following:

- Start IP/ End IP: A range of IP addresses that are accessible

from the Internet and will be allocated to guest VMs. Enter the

first and last IP addresses that define a range that CloudStack

can assign to guest VMs.

- Gateway: The gateway in use for the Portable IP addresses you

are configuring.

- Netmask: The netmask associated with the Portable IP range.

- VLAN: The VLAN that will be used for public traffic.

Click OK.

Acquiring a Portable IP

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, choose Network.

Click the name of the network you want to work with.

Click View IP Addresses.

Click Acquire New IP.

The Acquire New IP window is displayed.

Specify whether you want cross-zone IP or not.

Click Yes in the confirmation dialog.

Within a few moments, the new IP address should appear with the state

Allocated. You can now use the IP address in port forwarding or

static NAT rules.

Transferring Portable IP

An IP can be transferred from one network to another only if Static NAT

is enabled. However, when a portable IP is associated with a network,

you can use it for any service in the network.

To transfer a portable IP across the networks, execute the following

API:

http://localhost:8096/client/api?command=enableStaticNat&response=json&ipaddressid=a4bc37b2-4b4e-461d-9a62-b66414618e36&virtualmachineid=a242c476-ef37-441e-9c7b-b303e2a9cb4f&networkid=6e7cd8d1-d1ba-4c35-bdaf-333354cbd49810

Replace the UUIDs with the appropriate values. For example, if you want to

transfer a portable IP to network X and VM Y in a network, execute the

following:

http://localhost:8096/client/api?command=enableStaticNat&response=json&ipaddressid=a4bc37b2-4b4e-461d-9a62-b66414618e36&virtualmachineid=Y&networkid=X
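
Substituting the identifiers by hand is error-prone, so the replacement step can be scripted. The sketch below rewrites selected query parameters of a template URL using Python's standard library. The helper `with_params` and the placeholder values `IP_UUID`, `VM_UUID`, and `NETWORK_UUID` are hypothetical; the host, port, and parameter names are taken from the URLs shown above.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_params(url, **overrides):
    """Return url with the given query parameters replaced or added."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update(overrides)
    return urlunsplit(parts._replace(query=urlencode(query)))


# Template with placeholder identifiers (not real UUIDs).
template = (
    "http://localhost:8096/client/api?command=enableStaticNat&response=json"
    "&ipaddressid=IP_UUID&virtualmachineid=VM_UUID&networkid=NETWORK_UUID"
)

# Transfer the portable IP to VM Y in network X.
url = with_params(template, virtualmachineid="Y", networkid="X")
print(url)
```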

Multiple Subnets in Shared Network

CloudStack provides you with the flexibility to add guest IP ranges from

different subnets in Basic zones and security groups-enabled Advanced

zones. For security groups-enabled Advanced zones, it implies multiple

subnets can be added to the same VLAN. With the addition of this

feature, you will be able to add IP address ranges from the same subnet

or from a different one when IP addresses are exhausted. This in
turn allows you to employ a higher number of subnets and thus reduce the

address management overhead. You can delete the IP ranges you have

added.

Prerequisites and Guidelines

- This feature can only be implemented:

- on IPv4 addresses

- if virtual router is the DHCP provider

- on KVM, XenServer, and VMware hypervisors

- Manually configure the gateway of the new subnet before adding the IP

range.

- CloudStack supports only one gateway for a subnet; overlapping

subnets are not currently supported.

Adding Multiple Subnets to a Shared Network

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, choose Infrastructure.

On Zones, click View More, then click the zone you want to work with.

Click Physical Network.

In the Guest node of the diagram, click Configure.

Click Networks.

Select the networks you want to work with.

Click View IP Ranges.

Click Add IP Range.

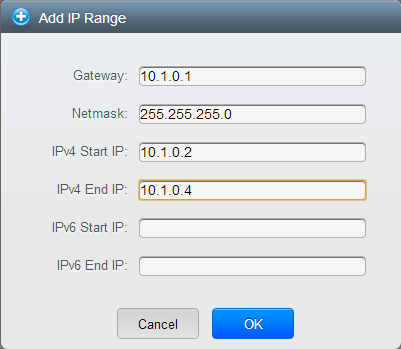

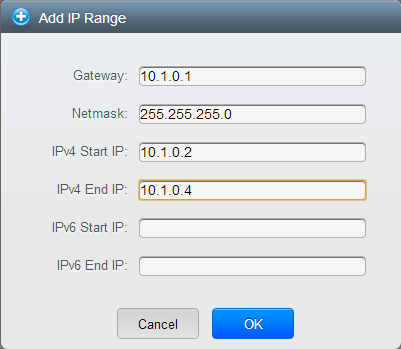

The Add IP Range dialog is displayed, as follows:

Specify the following:

All the fields are mandatory.

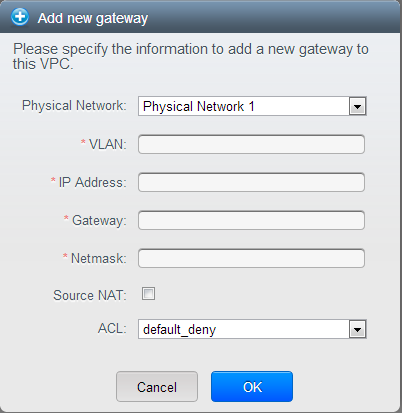

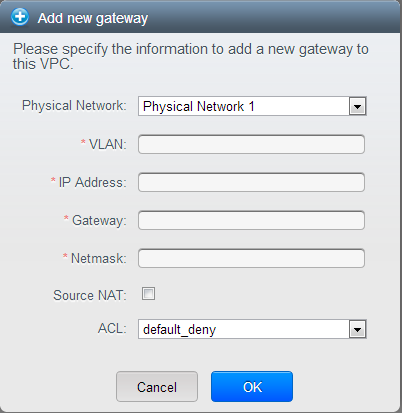

Gateway: The gateway for the tier you create. Ensure that the

gateway is within the Super CIDR range that you specified while

creating the VPC, and is not overlapped with the CIDR of any

existing tier within the VPC.

Netmask: The netmask for the tier you create.

For example, if the VPC CIDR is 10.0.0.0/16 and the network tier

CIDR is 10.0.1.0/24, the gateway of the tier is 10.0.1.1, and the

netmask of the tier is 255.255.255.0.

Start IP/ End IP: A range of IP addresses that are accessible

from the Internet and will be allocated to guest VMs. Enter the

first and last IP addresses that define a range that CloudStack

can assign to guest VMs.

Click OK.
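
The example values given in the Gateway and Netmask descriptions above can be checked with Python's standard `ipaddress` module. This is only a sketch of the arithmetic; taking the first address of the subnet as the gateway mirrors the example in the text and is a common convention, not a requirement stated in this guide.

```python
import ipaddress

vpc = ipaddress.ip_network("10.0.0.0/16")   # VPC (super) CIDR from the example
tier = ipaddress.ip_network("10.0.1.0/24")  # network tier CIDR from the example

# First address after the network address, as used for the gateway in the example.
gateway = tier.network_address + 1

print(tier.subnet_of(vpc))  # the tier must fall inside the super CIDR
print(gateway)              # gateway address for the tier
print(tier.netmask)         # netmask in dotted-decimal form
```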

Isolation in Advanced Zone Using Private VLAN

Isolation of guest traffic in shared networks can be achieved by using
Private VLANs (PVLAN). PVLANs provide Layer 2 isolation between ports
within the same VLAN. In a PVLAN-enabled shared network, a user VM
cannot reach other user VMs, though it can reach the DHCP server and
gateway. This in turn allows users to control traffic within a network
and helps them deploy multiple applications without communication
between applications, as well as prevent communication with other
users’ VMs.

- Isolate VMs in a shared network by using Private VLANs.

- Supported on KVM, XenServer, and VMware hypervisors

- PVLAN-enabled shared network can be a part of multiple networks of a

guest VM.

About Private VLAN

In an Ethernet switch, a VLAN is a broadcast domain where hosts can

establish direct communication with each other at Layer 2. Private

VLAN is designed as an extension of VLAN standard to add further

segmentation of the logical broadcast domain. A regular VLAN is a single

broadcast domain, whereas a private VLAN partitions a larger VLAN

broadcast domain into smaller sub-domains. A sub-domain is represented

by a pair of VLANs: a Primary VLAN and a Secondary VLAN. The original

VLAN that is being divided into smaller groups is called Primary, which

implies that all VLAN pairs in a private VLAN share the same Primary

VLAN. All the secondary VLANs exist only inside the Primary. Each

Secondary VLAN has a specific VLAN ID associated with it, which

differentiates one sub-domain from another.

Three types of ports exist in a private VLAN domain, which essentially

determine the behaviour of the participating hosts. Each port type has

its own unique set of rules, which regulate a connected host’s ability

to communicate with other connected hosts within the same private VLAN

domain. Each host that is part of a PVLAN pair can be configured by

using one of these three port designations:

- Promiscuous: A promiscuous port can communicate with all the

interfaces, including the community and isolated host ports that

belong to the secondary VLANs. In Promiscuous mode, hosts are

connected to promiscuous ports and are able to communicate directly

with resources on both primary and secondary VLAN. Routers, DHCP

servers, and other trusted devices are typically attached to

promiscuous ports.

- Isolated VLANs: The ports within an isolated VLAN cannot

communicate with each other at the layer-2 level. The hosts that are

connected to Isolated ports can directly communicate only with the

Promiscuous resources. If your customer device needs to have access

only to a gateway router, attach it to an isolated port.

- Community VLANs: The ports within a community VLAN can

communicate with each other and with the promiscuous ports, but they

cannot communicate with the ports in other communities at the layer-2

level. In Community mode, direct communication is permitted only

with the hosts in the same community and those that are connected to

the Primary PVLAN in promiscuous mode. If your customer has two

devices that need to be isolated from other customers’ devices, but

must be able to communicate among themselves, deploy them in community

ports.
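
The rules for the three port types can be summarized as a small reachability function. This is an illustrative model only, written in Python; the `Port` class and `can_communicate` function are hypothetical names and do not correspond to any CloudStack or switch API.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Port:
    name: str
    kind: str                        # "promiscuous", "isolated", or "community"
    community: Optional[int] = None  # secondary VLAN ID, used for community ports

def can_communicate(a: Port, b: Port) -> bool:
    """Layer 2 reachability between two ports that share a primary VLAN."""
    # A promiscuous port talks to every port in the private VLAN domain.
    if "promiscuous" in (a.kind, b.kind):
        return True
    # Community ports talk only to ports in the same community.
    if a.kind == "community" and b.kind == "community":
        return a.community == b.community
    # Isolated ports reach nothing except promiscuous ports.
    return False

router = Port("router", "promiscuous")
vm1 = Port("vm1", "isolated")
vm2 = Port("vm2", "isolated")
print(can_communicate(vm1, router), can_communicate(vm1, vm2))
```

In the model, two isolated VMs cannot reach each other, while each of them can still reach the router on the promiscuous port.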

Prerequisites

Use a PVLAN supported switch.

See Private VLAN Catalyst Switch Support Matrix for more information.

All PVLAN-aware layer 2 switches must be connected to

each other, and one of them must be connected to a router. Configure

all the ports connected to the hosts in trunk mode, and allow the

Management VLAN, Primary VLAN (public), and Secondary Isolated VLAN

on those ports. Configure the switch port connected to the router in PVLAN

promiscuous trunk mode, which translates an isolated VLAN to the

primary VLAN for the PVLAN-unaware router.

Note that only the Cisco Catalyst 4500 has the PVLAN promiscuous trunk

mode to connect both normal VLAN and PVLAN to a PVLAN-unaware switch.

For other Catalyst switches that support PVLAN, connect the switch to

the upper switch by using cables, one for each PVLAN pair.

Configure private VLAN on your physical switches out-of-band.

Before you use PVLAN on XenServer and KVM, enable Open vSwitch (OVS).

Note

OVS on XenServer and KVM does not support PVLAN natively. Therefore,

CloudStack simulates PVLAN on OVS for XenServer and KVM by

modifying the flow table.

Creating a PVLAN-Enabled Guest Network

Log in to the CloudStack UI as administrator.

In the left navigation, choose Infrastructure.

On Zones, click View More.

Click the zone to which you want to add a guest network.

Click the Physical Network tab.

Click the physical network you want to work with.

On the Guest node of the diagram, click Configure.

Click the Network tab.

Click Add guest network.

The Add guest network window is displayed.

Specify the following:

Name: The name of the network. This will be visible to the

user.

Description: The short description of the network that can be

displayed to users.

VLAN ID: The unique ID of the VLAN.

Secondary Isolated VLAN ID: The unique ID of the Secondary

Isolated VLAN.

For a description of the Secondary Isolated VLAN, see

About Private VLAN.

Scope: The available scopes are Domain, Account, Project, and

All.

- Domain: Selecting Domain limits the scope of this guest

network to the domain you specify. The network will not be

available for other domains. If you select Subdomain Access,

the guest network is available to all the subdomains within

the selected domain.

- Account: The account for which the guest network is being

created. You must specify the domain the account belongs

to.

- Project: The project for which the guest network is being

created. You must specify the domain the project belongs

to.

- All: The guest network is available for all the domains,

accounts, and projects within the selected zone.

Network Offering: If the administrator has configured multiple

network offerings, select the one you want to use for this

network.

Gateway: The gateway that the guests should use.

Netmask: The netmask in use on the subnet the guests will use.

IP Range: A range of IP addresses that are accessible from the

Internet and are assigned to the guest VMs.

Network Domain: A custom DNS suffix at the level of a network.

If you want to assign a special domain name to the guest VM

network, specify a DNS suffix.

Click OK to confirm.
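
The same fields can be collected for an API request instead of the UI form. The sketch below only builds and validates a parameter set in Python; the helper name `pvlan_network_params` is hypothetical, the parameter names are meant to mirror the `createNetwork` API command and should be verified against the API reference for your release, and the scope fields are left out. The resulting query string is unsigned, so it is not a complete request.

```python
from urllib.parse import urlencode

def pvlan_network_params(name, display_text, zone_id, network_offering_id,
                         vlan, isolated_pvlan, gateway, netmask,
                         start_ip, end_ip, network_domain=None):
    """Build (but do not send) parameters for a PVLAN-enabled guest network."""
    # 802.1Q VLAN IDs 0 and 4095 are reserved, so usable IDs are 1-4094.
    for label, vid in (("VLAN ID", vlan),
                       ("Secondary Isolated VLAN ID", isolated_pvlan)):
        if not 1 <= int(vid) <= 4094:
            raise ValueError(f"{label} must be in the range 1-4094, got {vid}")
    params = {
        "command": "createNetwork",       # assumed API command name
        "name": name,
        "displaytext": display_text,
        "zoneid": zone_id,
        "networkofferingid": network_offering_id,
        "vlan": str(vlan),
        "isolatedpvlan": str(isolated_pvlan),
        "gateway": gateway,
        "netmask": netmask,
        "startip": start_ip,
        "endip": end_ip,
    }
    if network_domain:
        params["networkdomain"] = network_domain
    return params

# Placeholder IDs and addresses, for illustration only.
params = pvlan_network_params("pvlan-net", "PVLAN shared network",
                              "zone-uuid", "offering-uuid",
                              100, 200, "10.2.1.1", "255.255.255.0",
                              "10.2.1.10", "10.2.1.100")
print(urlencode(params))
```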

Security Groups

About Security Groups

Security groups provide a way to isolate traffic to VMs. A security

group is a group of VMs that filter their incoming and outgoing traffic

according to a set of rules, called ingress and egress rules. These

rules filter network traffic according to the IP address that is

attempting to communicate with the VM. Security groups are particularly

useful in zones that use basic networking, because there is a single

guest network for all guest VMs. In advanced zones, security groups are

supported only on the KVM hypervisor.

Note

In a zone that uses advanced networking, you can instead define

multiple guest networks to isolate traffic to VMs.

Each CloudStack account comes with a default security group that denies

all inbound traffic and allows all outbound traffic. The default

security group can be modified so that all new VMs inherit some other

desired set of rules.

Any CloudStack user can set up any number of additional security groups.

When a new VM is launched, it is assigned to the default security group

unless another user-defined security group is specified. A VM can be a

member of any number of security groups. Once a VM is assigned to a

security group, it remains in that group for its entire lifetime; you

cannot move a running VM from one security group to another.

You can modify a security group by deleting or adding any number of

ingress and egress rules. When you do, the new rules apply to all VMs in

the group, whether running or stopped.

If no ingress rules are specified, then no traffic will be allowed in,

except for responses to any traffic that has been allowed out through an

egress rule.

Adding a Security Group

A user or administrator can define a new security group.

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, choose Network.

In Select view, choose Security Groups.

Click Add Security Group.

Provide a name and description.

Click OK.

The new security group appears in the Security Groups Details tab.

To make the security group useful, continue to Adding Ingress and

Egress Rules to a Security Group.

Security Groups in Advanced Zones (KVM Only)

CloudStack provides the ability to use security groups to provide

isolation between guests on a single shared, zone-wide network in an

advanced zone where KVM is the hypervisor. Using security groups in

advanced zones rather than multiple VLANs allows a greater range of

options for setting up guest isolation in a cloud.

Limitations

The following are not supported for this feature:

- Two IP ranges with the same VLAN and different gateway or netmask in

security group-enabled shared network.

- Two IP ranges with the same VLAN and different gateway or netmask in

account-specific shared networks.

- Multiple VLAN ranges in security group-enabled shared network.

- Multiple VLAN ranges in account-specific shared networks.

Security groups must be enabled in the zone in order for this feature to

be used.

Enabling Security Groups

In order for security groups to function in a zone, the security groups

feature must first be enabled for the zone. The administrator can do

this when creating a new zone, by selecting a network offering that

includes security groups. The procedure is described in Basic Zone

Configuration in the Advanced Installation Guide. The administrator

cannot enable security groups for an existing zone; this is possible only

when creating a new zone.

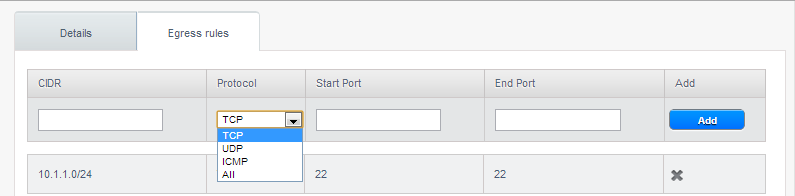

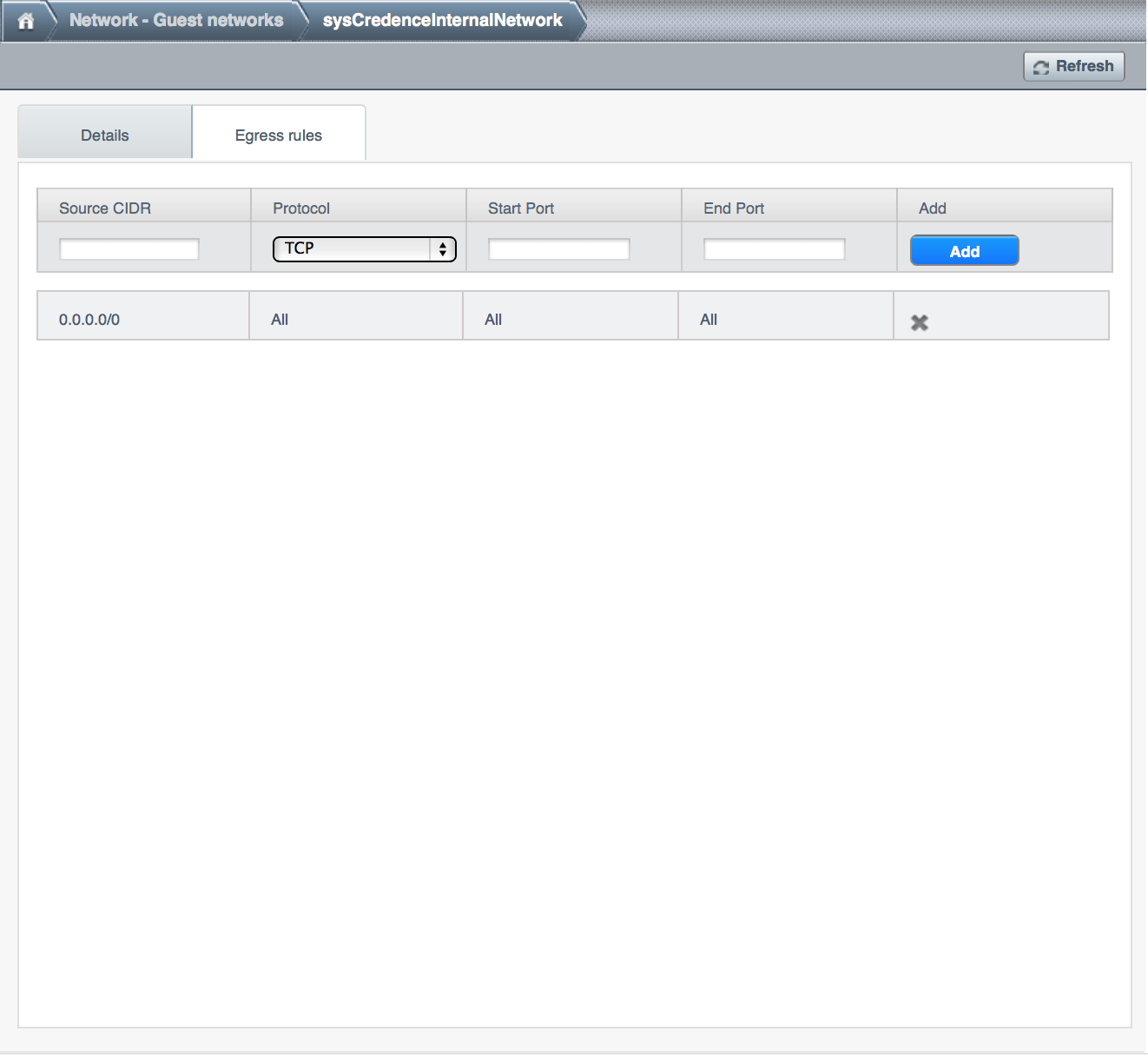

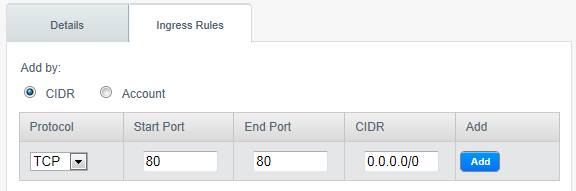

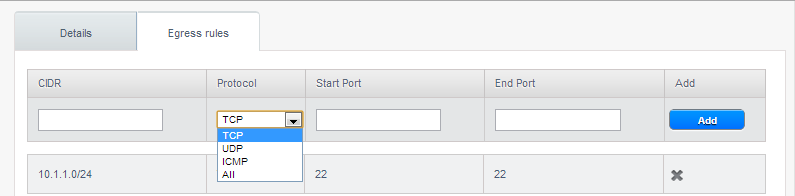

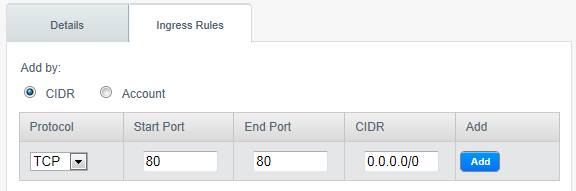

Adding Ingress and Egress Rules to a Security Group

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, choose Network.

In Select view, choose Security Groups, then click the security group

you want.

To add an ingress rule, click the Ingress Rules tab and fill out the

following fields to specify what network traffic is allowed into VM

instances in this security group. If no ingress rules are specified,

then no traffic will be allowed in, except for responses to any

traffic that has been allowed out through an egress rule.

- Add by CIDR/Account. Indicate whether the source of the

traffic will be defined by IP address (CIDR) or an existing

security group in a CloudStack account (Account). Choose Account

if you want to allow incoming traffic from all VMs in another

security group.

- Protocol. The networking protocol that sources will use to

send traffic to the security group. TCP and UDP are typically used

for data exchange and end-user communications. ICMP is typically

used to send error messages or network monitoring data.

- Start Port, End Port. (TCP, UDP only) A range of listening

ports that are the destination for the incoming traffic. If you

are opening a single port, use the same number in both fields.

- ICMP Type, ICMP Code. (ICMP only) The type of message and

error code that will be accepted.

- CIDR. (Add by CIDR only) To accept only traffic from IP

addresses within a particular address block, enter a CIDR or a

comma-separated list of CIDRs. The CIDR is the base IP address of

the incoming traffic. For example, 192.168.0.0/22. To allow all

CIDRs, set to 0.0.0.0/0.

- Account, Security Group. (Add by Account only) To accept only

traffic from another security group, enter the CloudStack account

and name of a security group that has already been defined in that

account. To allow traffic between VMs within the security group

you are editing now, enter its name.

The following example allows inbound HTTP access from anywhere:
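
As a minimal sketch, such a rule and the way it is matched against incoming traffic can be modeled in Python. The dictionary keys mirror the UI fields described above; `ingress_allows` is a hypothetical helper for illustration, not a CloudStack function, and it ignores ICMP and account-based rules.

```python
import ipaddress

# Ingress rule that allows inbound HTTP from anywhere.
http_from_anywhere = {
    "protocol": "TCP",
    "start_port": 80,
    "end_port": 80,
    "cidrs": ["0.0.0.0/0"],
}

def ingress_allows(rules, protocol, port, source_ip):
    """Return True if any rule admits the packet; with no rules, nothing is admitted."""
    src = ipaddress.ip_address(source_ip)
    for rule in rules:
        if rule["protocol"].upper() != protocol.upper():
            continue
        if not rule["start_port"] <= port <= rule["end_port"]:
            continue
        if any(src in ipaddress.ip_network(cidr) for cidr in rule["cidrs"]):
            return True
    return False

print(ingress_allows([http_from_anywhere], "TCP", 80, "203.0.113.7"))
print(ingress_allows([http_from_anywhere], "TCP", 22, "203.0.113.7"))
```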

To add an egress rule, click the Egress Rules tab and fill out the

following fields to specify what type of traffic is allowed to be

sent out of VM instances in this security group. If no egress rules

are specified, then all traffic will be allowed out. Once egress

rules are specified, the following types of traffic are allowed out:

traffic specified in egress rules; queries to DNS and DHCP servers;

and responses to any traffic that has been allowed in through an

ingress rule.

- Add by CIDR/Account. Indicate whether the destination of the

traffic will be defined by IP address (CIDR) or an existing

security group in a CloudStack account (Account). Choose Account

if you want to allow outgoing traffic to all VMs in another

security group.

- Protocol. The networking protocol that VMs will use to send

outgoing traffic. TCP and UDP are typically used for data exchange

and end-user communications. ICMP is typically used to send error

messages or network monitoring data.

- Start Port, End Port. (TCP, UDP only) A range of listening

ports that are the destination for the outgoing traffic. If you

are opening a single port, use the same number in both fields.

- ICMP Type, ICMP Code. (ICMP only) The type of message and

error code that will be sent.

- CIDR. (Add by CIDR only) To send traffic only to IP addresses

within a particular address block, enter a CIDR or a

comma-separated list of CIDRs. The CIDR is the base IP address of

the destination. For example, 192.168.0.0/22. To allow all CIDRs,

set to 0.0.0.0/0.

- Account, Security Group. (Add by Account only) To allow

traffic to be sent to another security group, enter the CloudStack

account and name of a security group that has already been defined

in that account. To allow traffic between VMs within the security

group you are editing now, enter its name.

Click Add.
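
The egress behavior differs from ingress in its default: with no egress rules everything is allowed out, and once a rule exists only matching traffic, DNS and DHCP queries, and responses to allowed inbound traffic may leave. The Python sketch below models that decision under simplifying assumptions; `egress_allows` and its boolean flags are hypothetical and stand in for the classification a real firewall performs.

```python
import ipaddress

def egress_allows(rules, protocol, port, dest_ip,
                  is_dns_or_dhcp=False, is_response=False):
    """Decide whether outbound traffic may leave a VM in the security group."""
    if not rules:
        return True   # no egress rules defined: all outbound traffic is allowed
    if is_dns_or_dhcp or is_response:
        return True   # always permitted once egress rules exist
    dst = ipaddress.ip_address(dest_ip)
    return any(
        rule["protocol"].upper() == protocol.upper()
        and rule["start_port"] <= port <= rule["end_port"]
        and any(dst in ipaddress.ip_network(cidr) for cidr in rule["cidrs"])
        for rule in rules
    )

https_only = [{"protocol": "TCP", "start_port": 443, "end_port": 443,
               "cidrs": ["0.0.0.0/0"]}]
print(egress_allows([], "TCP", 25, "198.51.100.5"))
print(egress_allows(https_only, "TCP", 25, "198.51.100.5"))
```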

External Firewalls and Load Balancers

CloudStack is capable of replacing its Virtual Router with an external

Juniper SRX device and an optional external NetScaler or F5 load

balancer for gateway and load balancing services. In this case, the VMs

use the SRX as their gateway.

About Using a NetScaler Load Balancer

Citrix NetScaler is supported as an external network element for load

balancing in advanced zones that use isolated networking. Set

up an external load balancer when you want to provide load balancing

through means other than CloudStack’s provided virtual router.

Note

In a Basic zone, load balancing service is supported only if

Elastic IP or Elastic LB services are enabled.

When a NetScaler load balancer is used to provide EIP or ELB services in a

Basic zone, ensure that all guest VM traffic enters and exits through

the NetScaler device. When inbound traffic goes through the NetScaler

device, traffic is routed by using NAT, depending on the

EIP/ELB configured on the public IP, to the private IP. The traffic that

originates from the guest VMs usually goes through the layer 3

router. To ensure that outbound traffic goes through the NetScaler device

providing EIP/ELB, the layer 3 router must have policy-based routing: a

policy-based route must be set up so that all traffic originating from

the guest VMs is directed to the NetScaler device. This is required to

ensure that the outbound traffic from the guest VMs is routed to a

public IP by using NAT. For more information on Elastic IP, see

“About Elastic IP”.

The NetScaler can be set up in direct (outside the firewall) mode. It

must be added before any load balancing rules are deployed on guest VMs

in the zone.

The functional behavior of the NetScaler with CloudStack is the same as

described in the CloudStack documentation for using an F5 external load

balancer. The only exception is that the F5 supports routing domains,

and NetScaler does not. NetScaler cannot yet be used as a firewall.

To install and enable an external load balancer for CloudStack

management, see External Guest Load Balancer Integration in the

Installation Guide.

The Citrix NetScaler comes in three varieties. The following

summarizes how these variants are treated in CloudStack.

MPX

- Capabilities: Physical appliance. Capable of deep packet inspection. Can act as

application firewall and load balancer.

- CloudStack support: In advanced zones, load balancer functionality is fully supported without

limitation. In basic zones, static NAT, elastic IP (EIP), and elastic

load balancing (ELB) are also provided.

VPX

- Capabilities: Virtual appliance. Can run as a VM on XenServer, ESXi, and Hyper-V

hypervisors. Same functionality as MPX.

- CloudStack support: Supported on ESXi and XenServer. Same functional support as for MPX.

CloudStack will treat VPX and MPX as the same device type.

SDX

- Capabilities: Physical appliance. Can create multiple fully isolated VPX instances on

a single appliance to support multi-tenant usage.

- CloudStack support: CloudStack will dynamically provision, configure, and manage the life

cycle of VPX instances on the SDX. Provisioned instances are added into

CloudStack automatically; no manual configuration by the administrator

is required. Once a VPX instance is added into CloudStack, it is treated

the same as a VPX on an ESXi host.

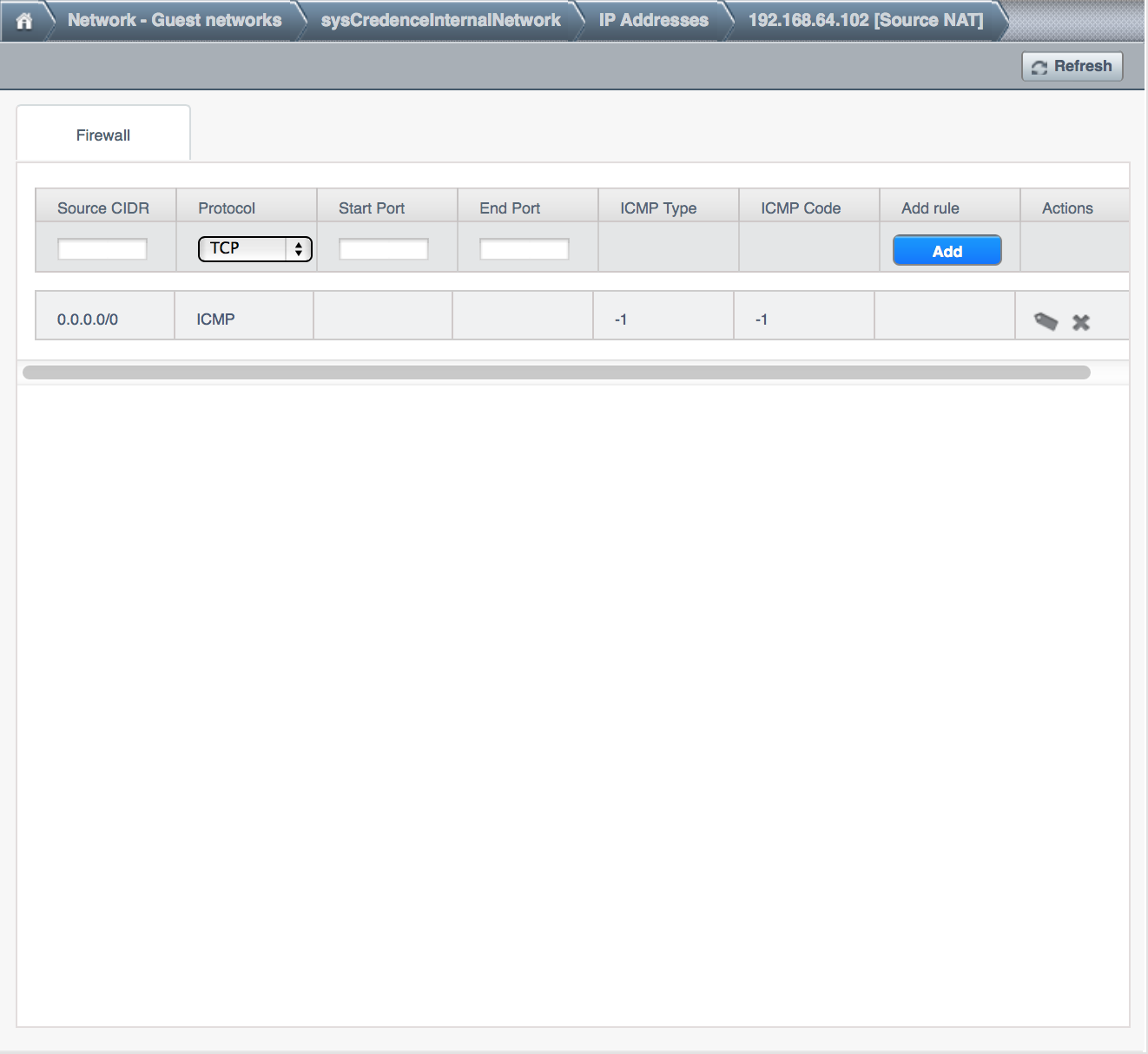

Initial Setup of External Firewalls and Load Balancers

When the first VM is created for a new account, CloudStack programs the

external firewall and load balancer to work with the VM. The following

objects are created on the firewall:

- A new logical interface to connect to the account’s private VLAN. The

interface IP is always the first IP of the account’s private subnet

(e.g. 10.1.1.1).

- A source NAT rule that forwards all outgoing traffic from the

account’s private VLAN to the public Internet, using the account’s

public IP address as the source address.

- A firewall filter counter that measures the number of bytes of

outgoing traffic for the account.

The following objects are created on the load balancer:

- A new VLAN that matches the account’s provisioned Zone VLAN.

- A self IP for the VLAN. This is always the second IP of the account’s

private subnet (e.g. 10.1.1.2).
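
The two addresses can be derived from the account's private subnet. The following Python sketch uses the standard `ipaddress` module; the function name `external_device_ips` is illustrative, and the 10.1.1.0/24 subnet is inferred from the example addresses above.

```python
import ipaddress

def external_device_ips(private_subnet):
    """Return (firewall interface IP, load balancer self IP) for a subnet."""
    hosts = iter(ipaddress.ip_network(private_subnet).hosts())
    firewall_ip = next(hosts)        # first usable address of the subnet
    load_balancer_ip = next(hosts)   # second usable address of the subnet
    return firewall_ip, load_balancer_ip

fw, lb = external_device_ips("10.1.1.0/24")
print(fw, lb)
```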

Ongoing Configuration of External Firewalls and Load Balancers

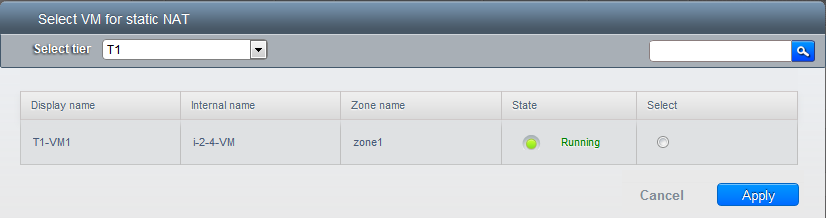

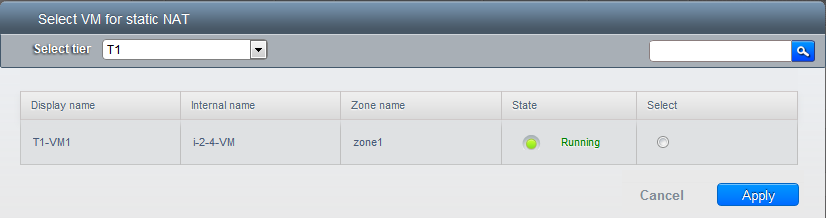

Additional user actions (e.g. setting a port forward) will cause further

programming of the firewall and load balancer. A user may request

additional public IP addresses and forward traffic received at these IPs

to specific VMs. This is accomplished by enabling static NAT for a

public IP address, assigning the IP to a VM, and specifying a set of

protocols and port ranges to open. When a static NAT rule is created,

CloudStack programs the zone’s external firewall with the following

objects:

- A static NAT rule that maps the public IP address to the private IP

address of a VM.

- A security policy that allows traffic within the set of protocols and

port ranges that are specified.

- A firewall filter counter that measures the number of bytes of

incoming traffic to the public IP.

The number of incoming and outgoing bytes through source NAT, static

NAT, and load balancing rules is measured and saved on each external

element. This data is collected on a regular basis and stored in the

CloudStack database.

Load Balancer Rules

A CloudStack user or administrator may create load balancing rules that

balance traffic received at a public IP to one or more VMs. A user

creates a rule, specifies an algorithm, and assigns the rule to a set of

VMs.

Note

If you create load balancing rules while using a network service

offering that includes an external load balancer device such as

NetScaler, and later change the network service offering to one that

uses the CloudStack virtual router, you must create a firewall rule on

the virtual router for each of your existing load balancing rules so

that they continue to function.

Adding a Load Balancer Rule

Log in to the CloudStack UI as an administrator or end user.

In the left navigation, choose Network.

Click the name of the network where you want to load balance the

traffic.

Click View IP Addresses.

Click the IP address for which you want to create the rule, then

click the Configuration tab.

In the Load Balancing node of the diagram, click View All.

In a Basic zone, you can also create a load balancing rule without

acquiring or selecting an IP address. CloudStack internally assigns an

IP when you create the load balancing rule, which is listed in the IP

Addresses page when the rule is created.

To do that, select the name of the network, then click the Add Load

Balancer tab. Continue with the next step.

Fill in the following:

- Name: A name for the load balancer rule.

- Public Port: The port receiving incoming traffic to be

balanced.

- Private Port: The port that the VMs will use to receive the

traffic.

- Algorithm: Choose the load balancing algorithm you want

CloudStack to use. CloudStack supports a variety of well-known

algorithms. If you are not familiar with these choices, you will

find plenty of information about them on the Internet.

- Stickiness: (Optional) Click Configure and choose the

algorithm for the stickiness policy. See Sticky Session Policies

for Load Balancer Rules.

- AutoScale: Click Configure and complete the AutoScale

configuration as explained in Configuring AutoScale.

- Health Check: (Optional; NetScaler load balancers only) Click

Configure and fill in the characteristics of the health check

policy. See Health Checks for Load Balancer Rules.

- Ping path (Optional): Sequence of destinations to which to

send health check queries. Default: / (all).

- Response time (Optional): How long to wait for a response

from the health check (2 - 60 seconds). Default: 5 seconds.

- Interval time (Optional): Amount of time between health

checks (1 second - 5 minutes). Default value is set in the

global configuration parameter lbrule_health

check_time_interval.

- Healthy threshold (Optional): Number of consecutive health

check successes that are required before declaring an instance

healthy. Default: 2.

- Unhealthy threshold (Optional): Number of consecutive

health check failures that are required before declaring an

instance unhealthy. Default: 10.

Click Add VMs, then select two or more VMs that will divide the load

of incoming traffic, and click Apply.

The new load balancer rule appears in the list. You can repeat these

steps to add more load balancer rules for this IP address.
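
The ranges and defaults of the health check fields listed above can be captured in a small validating structure. This is a sketch in Python, not CloudStack code: the class `HealthCheckPolicy` and its field names are hypothetical, the interval is left unset by default because its default comes from a global configuration parameter, and the requirement that thresholds be positive is an assumption.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class HealthCheckPolicy:
    ping_path: str = "/"
    response_time_s: int = 5            # allowed: 2 - 60 seconds
    interval_s: Optional[int] = None    # allowed: 1 second - 5 minutes; None = global default
    healthy_threshold: int = 2
    unhealthy_threshold: int = 10

    def __post_init__(self):
        if not 2 <= self.response_time_s <= 60:
            raise ValueError("response time must be between 2 and 60 seconds")
        if self.interval_s is not None and not 1 <= self.interval_s <= 300:
            raise ValueError("interval must be between 1 second and 5 minutes")
        # Assumption: a threshold below 1 is meaningless.
        if self.healthy_threshold < 1 or self.unhealthy_threshold < 1:
            raise ValueError("thresholds must be at least 1")

policy = HealthCheckPolicy(ping_path="/status", interval_s=30)
print(policy)
```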

Sticky Session Policies for Load Balancer Rules

Sticky sessions are used in Web-based applications to ensure continued

availability of information across the multiple requests in a user’s

session. For example, if a shopper is filling a cart, you need to

remember what has been purchased so far. The concept of “stickiness” is

also referred to as persistence or maintaining state.

Any load balancer rule defined in CloudStack can have a stickiness

policy. The policy consists of a name, stickiness method, and

parameters. The parameters are name-value pairs or flags, which are

defined by the load balancer vendor. The stickiness method could be load

balancer-generated cookie, application-generated cookie, or

source-based. In the source-based method, the source IP address is used

to identify the user and locate the user’s stored data. In the other

methods, cookies are used. The cookie generated by the load balancer or

application is included in request and response URLs to create

persistence. The cookie name can be specified by the administrator or

automatically generated. A variety of options are provided to control

the exact behavior of cookies, such as how they are generated and

whether they are cached.

For the most up to date list of available stickiness methods, see the

CloudStack UI or call listNetworks and check the

SupportedStickinessMethods capability.
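
To illustrate the source-based method, the Python sketch below keeps a table from source IP address to back-end VM so that repeated requests from the same address reach the same VM. It is a conceptual model only: the class `SourceStickyBalancer` is hypothetical, round-robin is chosen arbitrarily for first-time clients, and real load balancers implement persistence in their own ways (including entry expiry, which is omitted here).

```python
import itertools

class SourceStickyBalancer:
    """Route each source IP to the same back end on every request."""

    def __init__(self, backends):
        self._next_backend = itertools.cycle(backends)  # round-robin for new clients
        self._table = {}                                 # source IP -> chosen back end

    def route(self, source_ip):
        if source_ip not in self._table:
            self._table[source_ip] = next(self._next_backend)
        return self._table[source_ip]

lb = SourceStickyBalancer(["vm-a", "vm-b"])
print(lb.route("198.51.100.1"), lb.route("198.51.100.2"), lb.route("198.51.100.1"))
```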

Health Checks for Load Balancer Rules

(NetScaler load balancer only; requires NetScaler version 10.0)

Health checks are used in load-balanced applications to ensure that

requests are forwarded only to running, available services. When

creating a load balancer rule, you can specify a health check policy.

This is in addition to specifying the stickiness policy, algorithm, and

other load balancer rule options. You can configure one health check

policy per load balancer rule.

Any load balancer rule defined on a NetScaler load balancer in

CloudStack can have a health check policy. The policy consists of a ping

path, thresholds to define “healthy” and “unhealthy” states, health

check frequency, and timeout wait interval.

When a health check policy is in effect, the load balancer will stop

forwarding requests to any resources that are found to be unhealthy. If

the resource later becomes available again, the periodic health check

will discover it, and the resource will once again be added to the pool

of resources that can receive requests from the load balancer. At any

given time, the most recent result of the health check is displayed in

the UI. For any VM that is attached to a load balancer rule with a

health check configured, the state will be shown as UP or DOWN in the UI

depending on the result of the most recent health check.
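
The interaction between the healthy and unhealthy thresholds and the UP or DOWN state can be sketched as a small state tracker. The Python class below is illustrative only; `HealthTracker` is a hypothetical name, and the logic shows one common reading of consecutive-success and consecutive-failure thresholds rather than the NetScaler implementation.

```python
class HealthTracker:
    """Track UP/DOWN state from a stream of health check results."""

    def __init__(self, healthy_threshold=2, unhealthy_threshold=10, initial_state="UP"):
        self.healthy_threshold = healthy_threshold
        self.unhealthy_threshold = unhealthy_threshold
        self.state = initial_state
        self._successes = 0   # consecutive successful checks
        self._failures = 0    # consecutive failed checks

    def record(self, success):
        if success:
            self._successes += 1
            self._failures = 0
            if self.state == "DOWN" and self._successes >= self.healthy_threshold:
                self.state = "UP"
        else:
            self._failures += 1
            self._successes = 0
            if self.state == "UP" and self._failures >= self.unhealthy_threshold:
                self.state = "DOWN"
        return self.state

tracker = HealthTracker(healthy_threshold=2, unhealthy_threshold=3)
print([tracker.record(ok) for ok in (False, False, False, True, True)])
```

A resource marked DOWN stops receiving requests and returns to the pool only after enough consecutive successful checks.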

You can delete or modify existing health check policies.

To configure how often the health check is performed by default, use the

global configuration setting healthcheck.update.interval (default value

is 600 seconds). You can override this value for an individual health

check policy.

For details on how to set a health check policy using the UI, see

Adding a Load Balancer Rule.

Configuring AutoScale

AutoScaling allows you to scale your back-end services or application

VMs up or down seamlessly and automatically according to the conditions

you define. With AutoScaling enabled, you can ensure that the number of

VMs you are using seamlessly scales up when demand increases, and

automatically decreases when demand subsides. Thus it helps you save

compute costs by terminating underused VMs automatically and launching

new VMs when you need them, without the need for manual intervention.

NetScaler AutoScaling is designed to seamlessly launch or terminate VMs

based on user-defined conditions. Conditions for triggering a scaleup or

scaledown action can vary from a simple use case like monitoring the CPU

usage of a server to a complex use case of monitoring a combination of

a server’s responsiveness and its CPU usage. For example, you can

configure AutoScaling to launch an additional VM whenever CPU usage

exceeds 80 percent for 15 minutes, or to remove a VM whenever CPU usage

is less than 20 percent for 30 minutes.
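
A condition such as "CPU usage exceeds 80 percent for 15 minutes" has to hold for the whole period, not just at one instant. The Python sketch below shows one way to express that check over timestamped samples; the function `sustained` and the sample format are assumptions made for illustration and do not describe how NetScaler evaluates counters.

```python
def sustained(samples, predicate, duration_s, now):
    """Return True if predicate held for every sample in the last duration_s seconds.

    samples: list of (timestamp_seconds, value), sorted by timestamp.
    """
    window_start = now - duration_s
    # Require data that reaches back to the start of the window.
    if not samples or samples[0][0] > window_start:
        return False
    in_window = [value for ts, value in samples if ts >= window_start]
    return bool(in_window) and all(predicate(value) for value in in_window)

# One CPU sample per minute for 16 minutes, all at 85 percent.
cpu = [(t, 85.0) for t in range(0, 961, 60)]
print(sustained(cpu, lambda v: v > 80, duration_s=15 * 60, now=960))
```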

CloudStack uses the NetScaler load balancer to monitor all aspects of a

system’s health and work in unison with CloudStack to initiate scale-up

or scale-down actions.

Note

AutoScale is supported on NetScaler Release 10 Build 74.4006.e and beyond.

Prerequisites

Before you configure an AutoScale rule, consider the following:

Ensure that the necessary template is prepared before configuring

AutoScale. When a VM is deployed by using a template and when it

comes up, the application should be up and running.

Note

If the application is not running, the NetScaler device considers the

VM as ineffective and continues provisioning the VMs unconditionally

until the resource limit is exhausted.

Deploy the templates you prepared. Ensure that the applications come

up on the first boot and are ready to take traffic. Observe the

time required to deploy the template. Consider this time when you

specify the quiet time while configuring AutoScale.

The AutoScale feature supports the SNMP counters that can be used to

define conditions for taking scale up or scale down actions. To

monitor the SNMP-based counter, ensure that the SNMP agent is

installed in the template used for creating the AutoScale VMs, and

the SNMP operations work with the configured SNMP community and port

by using standard SNMP managers. For example, see

“Configuring SNMP Community String on a RHEL Server”

to configure SNMP on a RHEL machine.

Ensure that the endpointe.url parameter present in the Global

Settings is set to the Management Server API URL. For example,

http://10.102.102.22:8080/client/api. In a multi-node Management

Server deployment, use the virtual IP address configured in the load

balancer for the management server’s cluster. Additionally, ensure

that the NetScaler device has access to this IP address to provide

AutoScale support.

If you update the endpointe.url, disable the AutoScale functionality

of the load balancer rules in the system, then re-enable it to

apply the changes. For more information, see Updating an AutoScale Configuration.

If the API Key and Secret Key are regenerated for an AutoScale user,

ensure that the AutoScale functionality of the load balancers that

the user participates in is disabled and then re-enabled to reflect the

configuration changes in the NetScaler.

In an advanced zone, ensure that at least one VM is present

before configuring a load balancer rule with AutoScale. Having one VM

in the network ensures that the network is in the Implemented state for

configuring AutoScale.

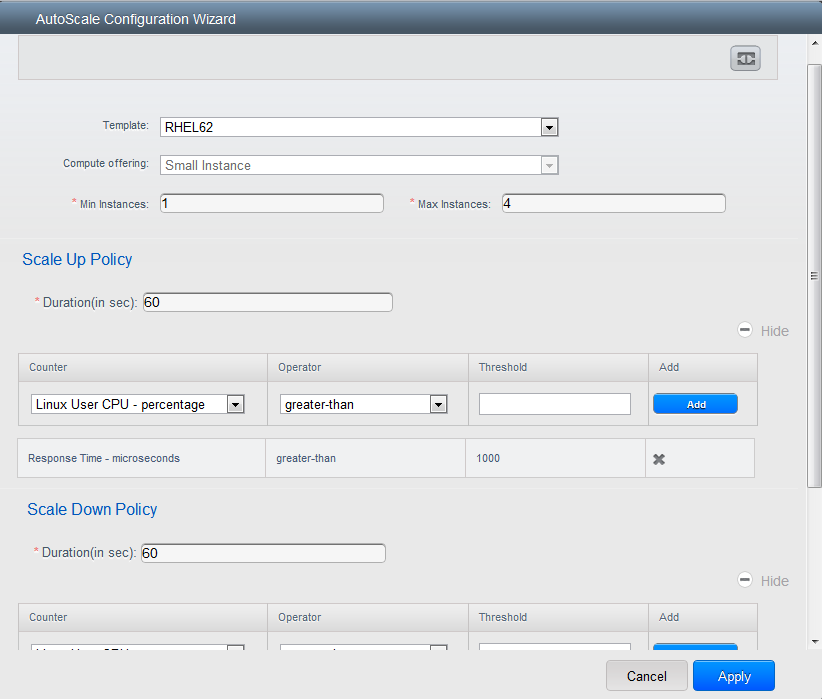

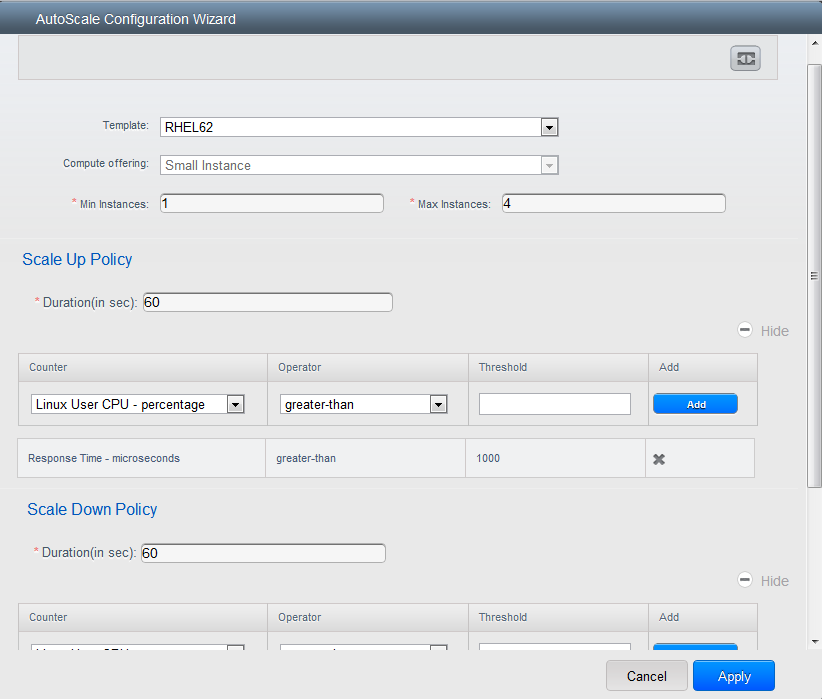

Configuration

Specify the following:

Template: A template consists of a base OS image and application.

A template is used to provision the new instance of an application on

a scaleup action. When a VM is deployed from a template, the VM can

start taking the traffic from the load balancer without any admin

intervention. For example, if the VM is deployed for a Web service,

it should have the Web server running, the database connected, and so

on.

Compute offering: A predefined set of virtual hardware

attributes, including CPU speed, number of CPUs, and RAM size, that

the user can select when creating a new virtual machine instance.

Choose one of the compute offerings to be used while provisioning a

VM instance as part of scaleup action.

Min Instance: The minimum number of active VM instances that are

assigned to a load balancing rule. The active VM instances are the

application instances that are up and serving the traffic, and are

being load balanced. This parameter ensures that a load balancing

rule has at least the configured number of active VM instances

available to serve the traffic.

Note

If an application, such as SAP, running on a VM instance is down for

some reason, the VM is then not counted as part of Min Instance

parameter, and the AutoScale feature initiates a scaleup action if

the number of active VM instances is below the configured value.

Similarly, when an application instance comes up from its earlier

down state, this application instance is counted as part of the

active instance count and the AutoScale process initiates a scaledown

action when the active instance count breaches the Max Instance

value.

Max Instance: Maximum number of active VM instances that should

be assigned to a load balancing rule. This parameter defines the

upper limit of active VM instances that can be assigned to a load

balancing rule.

Specifying a large value for the maximum instance parameter might

result in provisioning a large number of VM instances, which in turn

leads to a single load balancing rule exhausting the VM instances

limit specified at the account or domain level.

Note

If an application, such as SAP, running on a VM instance is down for

some reason, the VM is not counted as part of Max Instance parameter.

So there may be scenarios where the number of VMs provisioned for a

scaleup action might be more than the configured Max Instance value.

Once the application instances in the VMs are up from an earlier down

state, the AutoScale feature starts aligning to the configured Max

Instance value.
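
The effect of the Min Instance and Max Instance bounds can be summarized as a simple decision on the count of active instances. The Python function below is an illustrative sketch with a hypothetical name; it counts only instances whose application is up, as described in the notes above, and ignores the policy conditions that trigger scaling within the bounds.

```python
def bounds_action(active_instances, min_instances, max_instances):
    """Return the action implied by the Min/Max Instance bounds alone."""
    if min_instances > max_instances:
        raise ValueError("Min Instance must not exceed Max Instance")
    if active_instances < min_instances:
        return "scaleup"     # too few active instances are serving traffic
    if active_instances > max_instances:
        return "scaledown"   # active count breaches the upper limit
    return "none"

print(bounds_action(1, 2, 5), bounds_action(3, 2, 5), bounds_action(6, 2, 5))
```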

Specify the following scale-up and scale-down policies:

- Duration: The duration, in seconds, for which the conditions you

specify must be true to trigger a scaleup action. The conditions

defined should hold true for the entire duration you specify for an

AutoScale action to be invoked.

- Counter: The performance counters expose the state of the

monitored instances. By default, CloudStack offers four performance

counters: Three SNMP counters and one NetScaler counter. The SNMP

counters are Linux User CPU, Linux System CPU, and Linux CPU Idle.

The NetScaler counter is ResponseTime. The root administrator can add

additional counters into CloudStack by using the CloudStack API.

- Operator: The following five relational operators are supported

in AutoScale feature: Greater than, Less than, Less than or equal to,

Greater than or equal to, and Equal to.

- Threshold: Threshold value to be used for the counter. Once the

counter defined above breaches the threshold value, the AutoScale

feature initiates a scaleup or scaledown action.

- Add: Click Add to add the condition.
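
How a condition combines counter, operator, threshold, and duration can be sketched as follows. This is an illustrative model only, not the NetScaler or CloudStack implementation: the operator keys and the function condition_holds are hypothetical, and the sketch assumes the counter is sampled at a fixed interval.

```python
import operator

# Mapping from the five relational operators named above to Python functions.
# The short keys are illustrative labels, not CloudStack identifiers.
OPERATORS = {
    "GT": operator.gt,
    "LT": operator.lt,
    "LE": operator.le,
    "GE": operator.ge,
    "EQ": operator.eq,
}


def condition_holds(samples, op, threshold, duration, interval):
    """Return True if every sample in the last `duration` seconds breaches.

    `samples` is a list of counter readings taken every `interval` seconds,
    oldest first.
    """
    needed = max(1, duration // interval)
    if len(samples) < needed:
        return False
    compare = OPERATORS[op]
    return all(compare(value, threshold) for value in samples[-needed:])


# CPU readings taken every 30 seconds, oldest first.
cpu = [40, 85, 90, 95]
breach_90s = condition_holds(cpu, "GT", 80, duration=90, interval=30)
breach_120s = condition_holds(cpu, "GT", 80, duration=120, interval=30)
```

With these readings the condition holds for a 90-second duration but not for a 120-second duration, because the oldest reading is below the threshold.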

Additionally, if you want to configure the advanced settings, click Show

advanced settings, and specify the following:

- Polling interval: Frequency at which the conditions (a combination

of counter, operator, and threshold) are evaluated before taking

a scaleup or scaledown action. The default polling interval is 30

seconds.

- Quiet Time: This is the cool down period after an AutoScale

action is initiated. The time includes the time taken to complete

provisioning a VM instance from its template and the time taken by an

application to be ready to serve traffic. This quiet time allows the

fleet to come up to a stable state before any action can take place.

The default is 300 seconds.

- Destroy VM Grace Period: The duration in seconds, after a

scaledown action is initiated, to wait before the VM is destroyed as

part of scaledown action. This is to ensure graceful close of any

pending sessions or transactions being served by the VM marked for

destroy. The default is 120 seconds.

- Security Groups: Security groups provide a way to isolate traffic

to the VM instances. A security group is a group of VMs that filter

their incoming and outgoing traffic according to a set of rules,

called ingress and egress rules. These rules filter network traffic

according to the IP address that is attempting to communicate with

the VM.

- Disk Offerings: A predefined set of disk sizes for primary data

storage.

- SNMP Community: The SNMP community string to be used by the

NetScaler device to query the configured counter value from the

provisioned VM instances. Default is public.

- SNMP Port: The port number on which the SNMP agent that runs on

the provisioned VMs is listening. Default port is 161.

- User: This is the user that the NetScaler device uses to invoke

scaleup and scaledown API calls to the cloud. If no option is

specified, the user who configures AutoScaling is applied. Specify

another user name to override.

- Apply: Click Apply to create the AutoScale configuration.
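
The defaults listed above, and the timing role of Quiet Time and Destroy VM Grace Period, can be summarized in a short sketch. The class and function names are hypothetical; only the default values come from the text, and timestamps are assumed to be plain seconds.

```python
from dataclasses import dataclass


@dataclass
class AdvancedSettings:
    polling_interval: int = 30          # seconds between evaluations
    quiet_time: int = 300               # cool-down after an AutoScale action
    destroy_vm_grace_period: int = 120  # wait before destroying a VM
    snmp_community: str = "public"
    snmp_port: int = 161


def in_quiet_time(settings, last_action_at, now):
    """True while the cool-down after the last action is still running."""
    return (now - last_action_at) < settings.quiet_time


def destroy_at(settings, scaledown_started_at):
    """Earliest time at which a VM marked for destroy is removed."""
    return scaledown_started_at + settings.destroy_vm_grace_period


settings = AdvancedSettings()
```

For example, with the defaults, an action taken at second 1000 blocks further actions until second 1300, and a VM marked for destroy at second 1000 is removed no earlier than second 1120.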

Disabling and Enabling an AutoScale Configuration

If you want to perform any maintenance operation on the AutoScale VM

instances, disable the AutoScale configuration. When the AutoScale

configuration is disabled, no scaleup or scaledown action is performed.

You can use this downtime for the maintenance activities. To disable the

AutoScale configuration, click the Disable AutoScale button.

The button toggles between enable and disable, depending on whether

AutoScale is currently enabled or not. After the maintenance operations

are done, you can enable the AutoScale configuration back. To enable,

open the AutoScale configuration page again, then click the Enable

AutoScale button.

Updating an AutoScale Configuration

You can update the various parameters and add or delete the conditions

in a scaleup or scaledown rule. Before you update an AutoScale

configuration, ensure that you disable the AutoScale load balancer rule

by clicking the Disable AutoScale button.

After you modify the required AutoScale parameters, click Apply. To

apply the new AutoScale policies, open the AutoScale configuration page

again, then click the Enable AutoScale button.
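
The disable, update, enable order can be modeled with a small sketch. AutoScaleConfig is a toy class written for illustration and is not a CloudStack class or API; it only encodes the rule that updates are accepted while the configuration is disabled.

```python
class AutoScaleConfig:
    """Toy model of an AutoScale configuration (not a CloudStack class)."""

    def __init__(self, **params):
        self.enabled = True
        self.params = dict(params)

    def disable(self):
        self.enabled = False

    def enable(self):
        self.enabled = True

    def update(self, **changes):
        # Mirror the rule above: updates are only allowed while disabled.
        if self.enabled:
            raise RuntimeError("disable AutoScale before updating")
        self.params.update(changes)


config = AutoScaleConfig(max_instance=5, quiet_time=300)
config.disable()                # step 1: Disable AutoScale
config.update(max_instance=8)   # step 2: modify parameters and apply
config.enable()                 # step 3: Enable AutoScale
```

Calling update while the configuration is still enabled raises an error in this model, which makes an out-of-order change visible immediately.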

Runtime Considerations

- An administrator should not assign a VM to a load balancing rule

which is configured for AutoScale.

- If NetScaler is shut down or restarted before VM provisioning is

completed, the provisioned VM cannot become part of the load balancing

rule, even though the intent was to assign it to that rule. As a

workaround, rename the AutoScale-provisioned VMs based on the rule

name or ID so that at any point the VMs can be reconciled with their

load balancing rule.

- Making API calls outside the context of AutoScale, such as destroyVM,

on an autoscaled VM leaves the load balancing configuration in an

inconsistent state. Though the VM is destroyed from the load balancer

rule, NetScaler continues to show the VM as a service assigned to a

rule.
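
The renaming workaround mentioned above can be sketched as follows. The naming convention "as-<rule id>-<sequence number>" is an assumption made for this example, as are the function names; the sketch also assumes rule IDs are alphanumeric.

```python
import re
from collections import defaultdict

# Hypothetical naming convention: "as-<rule id>-<sequence number>".
NAME_PATTERN = re.compile(r"^as-(?P<rule>[A-Za-z0-9]+)-(?P<seq>\d+)$")


def autoscale_vm_name(rule_id, seq):
    """Build a VM name that embeds the load balancing rule ID."""
    return f"as-{rule_id}-{seq}"


def reconcile(vm_names):
    """Group VM names by the rule ID embedded in the name."""
    by_rule = defaultdict(list)
    for name in vm_names:
        match = NAME_PATTERN.match(name)
        if match:  # names that do not follow the convention are skipped
            by_rule[match.group("rule")].append(name)
    return dict(by_rule)


names = [
    autoscale_vm_name("lb42", 1),
    autoscale_vm_name("lb42", 2),
    "manual-vm",
    autoscale_vm_name("lb7", 1),
]
grouped = reconcile(names)
```

Here grouped maps "lb42" to its two VMs and "lb7" to one, while "manual-vm" is ignored because it does not follow the convention.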

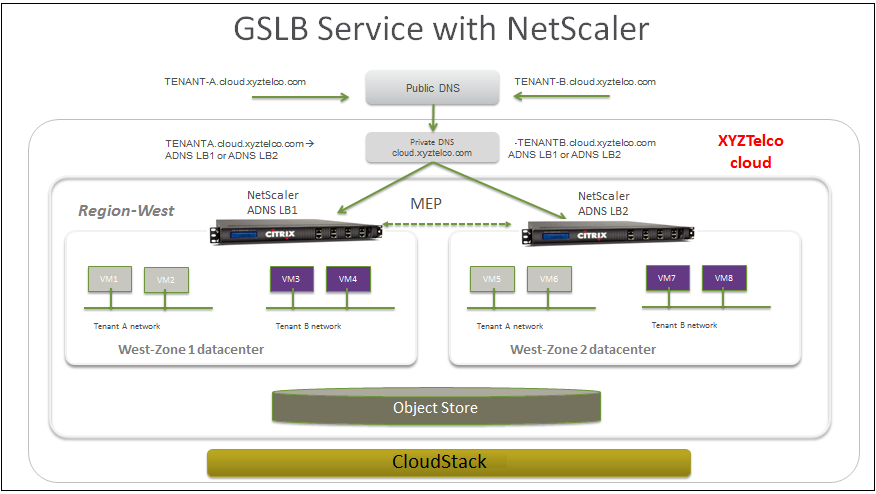

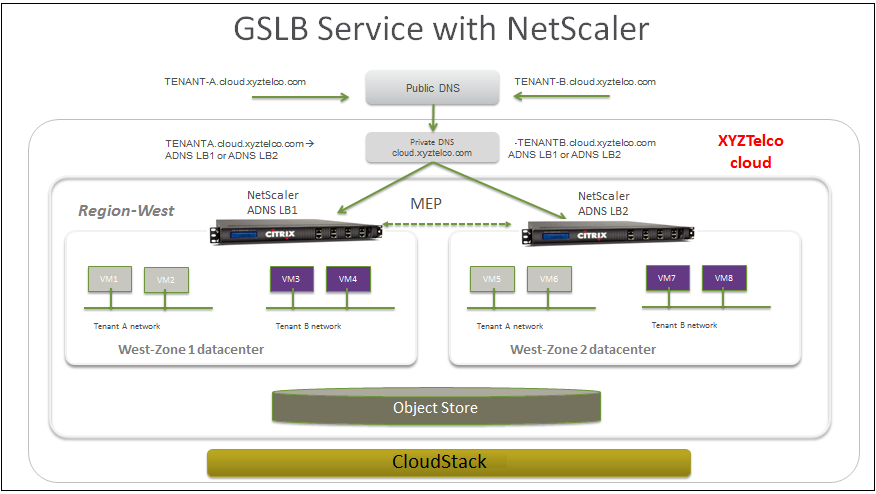

Global Server Load Balancing Support

CloudStack supports Global Server Load Balancing (GSLB) functionalities

to provide business continuity, and enable seamless resource movement

within a CloudStack environment. CloudStack achieves this by extending

its functionality of integrating with NetScaler Application Delivery

Controller (ADC), which also provides various GSLB capabilities, such as

disaster recovery and load balancing. The DNS redirection technique is

used to achieve GSLB in CloudStack.

In order to support this functionality, region-level services and

service providers are introduced. A new service, ‘GSLB’, is introduced as a

region-level service, along with a GSLB service provider that

provides the GSLB service. Currently, NetScaler is the supported GSLB

provider in CloudStack. GSLB functionality works in an Active-Active

data center environment.

About Global Server Load Balancing

Global Server Load Balancing (GSLB) is an extension of load balancing

functionality, which is highly efficient in avoiding downtime. Based on

the nature of deployment, GSLB represents a set of technologies that is

used for various purposes, such as load sharing, disaster recovery,

performance, and legal obligations. With GSLB, workloads can be

distributed across multiple data centers situated at geographically

separated locations. GSLB can also provide an alternate location for

accessing a resource in the event of a failure, or to provide a means of

shifting traffic easily to simplify maintenance, or both.

Components of GSLB

A typical GSLB environment consists of the following components:

- GSLB Site: In CloudStack terminology, GSLB sites are represented

by zones that are mapped to data centers, each of which has various

network appliances. Each GSLB site is managed by a NetScaler

appliance that is local to that site. Each of these appliances treats

its own site as the local site and all other sites, managed by other

appliances, as remote sites. A GSLB site is the central entity in a GSLB

deployment, and is represented by a name and an IP address.

- GSLB Services: A GSLB service is typically represented by a load

balancing or content switching virtual server. In a GSLB environment,

you can have local as well as remote GSLB services. A local GSLB

service represents a local load balancing or content switching

virtual server. A remote GSLB service is the one configured at one of

the other sites in the GSLB setup. At each site in the GSLB setup,

you can create one local GSLB service and any number of remote GSLB

services.

- GSLB Virtual Servers: A GSLB virtual server refers to one or more

GSLB services and balances traffic across the VMs in

multiple zones by using the CloudStack functionality. It evaluates

the configured GSLB methods or algorithms to select a GSLB service to

which to send the client requests. One or more virtual servers from

different zones are bound to the GSLB virtual server. A GSLB virtual

server does not have a public IP associated with it; instead, it

has an FQDN DNS name.

- Load Balancing or Content Switching Virtual Servers: According to

Citrix NetScaler terminology, a load balancing or content switching

virtual server represents one or many servers on the local network.

Clients send their requests to the load balancing or content

switching virtual server’s virtual IP (VIP) address, and the virtual

server balances the load across the local servers. After a GSLB

virtual server selects a GSLB service representing either a local or

a remote load balancing or content switching virtual server, the

client sends the request to that virtual server’s VIP address.

- DNS VIPs: DNS virtual IP represents a load balancing DNS virtual

server on the GSLB service provider. The DNS requests for domains for

which the GSLB service provider is authoritative can be sent to a DNS

VIP.

- Authoritative DNS: ADNS (Authoritative Domain Name Server) is a

service that provides the actual answer to DNS queries, such as a web site

IP address. In a GSLB environment, an ADNS service responds only to

DNS requests for domains for which the GSLB service provider is

authoritative. When an ADNS service is configured, the service

provider owns that IP address and advertises it. When you create an

ADNS service, the NetScaler responds to DNS queries on the configured

ADNS service IP and port.
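
How these components fit together under DNS redirection can be sketched in a few lines. This is a simplified illustration, not NetScaler behavior: the class name, the domain name, and the documentation-range IP addresses are placeholders, and round robin is assumed as the GSLB method purely for the example.

```python
from itertools import cycle


class GslbVirtualServer:
    """Toy GSLB virtual server identified by an FQDN instead of a public IP."""

    def __init__(self, fqdn, services):
        self.fqdn = fqdn
        self.services = services          # list of (site, vip) tuples
        self._rotation = cycle(services)  # assumed method: round robin

    def resolve(self, name):
        """Answer a DNS query for `name` with the VIP of one GSLB service."""
        if name != self.fqdn:
            return None  # not authoritative for this name
        site, vip = next(self._rotation)
        return vip


gslb = GslbVirtualServer(
    "www.example.com",
    [("Zone-1", "192.0.2.10"), ("Zone-2", "198.51.100.10")],
)
answers = [gslb.resolve("www.example.com") for _ in range(3)]
```

Each DNS answer hands the client the VIP of a load balancing virtual server in one zone; the client then sends its request to that VIP, as described in the component list.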

How Does GSLB Work in CloudStack?

Global server load balancing is used to manage the traffic flow to a web

site hosted on two separate zones that ideally are in different

geographic locations. The following is an illustration of how GSLB

functionality is provided in CloudStack: An organization, xyztelco, has

set up a public cloud that spans two zones, Zone-1 and Zone-2, across

geographically separated data centers that are managed by CloudStack.

Tenant-A of the cloud launches a highly available solution by using the

xyztelco cloud. For that purpose, they launch two instances in each of

the zones: VM1 and VM2 in Zone-1 and VM5 and VM6 in Zone-2. Tenant-A

acquires a public IP, IP-1 in Zone-1, and configures a load balancer

rule to load balance the traffic between VM1 and VM2 instances.

CloudStack orchestrates setting up a virtual server on the LB service

provider in Zone-1. Virtual server 1 that is set up on the LB service

provider in Zone-1 represents a publicly accessible virtual server that

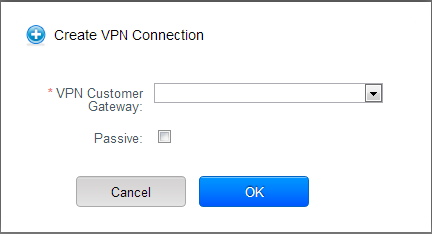

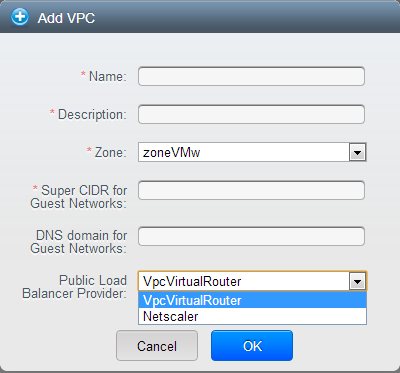

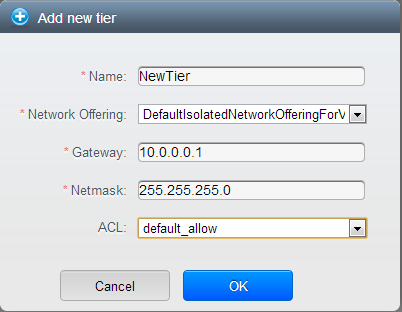

client reaches at IP-1. The client traffic to virtual server 1 at IP-1